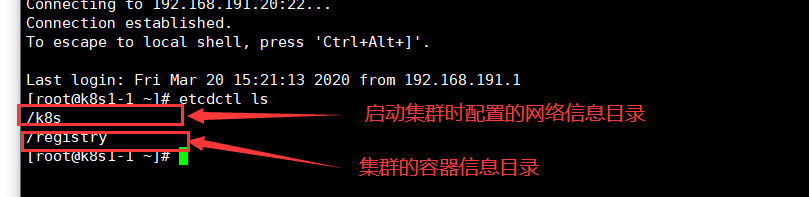

The data stored in etcd is divided into flannel network data and k8s container data.

1. Back up the etcd data directory directly (generally used for a single node)

By default, etcd stores its data in the working directory from which the etcd command was run. Inside the data directory (under `member/`), the data is split into two folders:

snap: stores snapshot data. etcd takes snapshots so that the WAL files do not grow without bound; a snapshot records the state of the etcd data at that point.

wal: the write-ahead log, whose most important function is to record the entire history of data changes. In etcd, every data change must be written to the WAL before it is committed.

In general, the etcd data directory is /var/lib/etcd, and you can back it up by packaging the files in this directory directly.

This method of directly backing up and restoring the directory files cannot be used for a multi-node etcd cluster.

Before backing up, stop the corresponding service with `docker stop`, and start it again after the backup finishes.

Note that while the etcd service is stopped, it is unavailable for the whole duration of the backup.
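The stop, package, restart sequence described above can be sketched as a small shell function. This is a minimal sketch that assumes etcd runs as a Docker container and that its data directory is visible on the host (for example /var/lib/etcd); the function name `cold_backup_etcd` and its arguments are hypothetical. It starts the container again even if `tar` fails, so a failed backup does not leave etcd down.

```shell
# Hypothetical helper: stop the etcd container, archive its data directory,
# then start the container again even if archiving failed.
# Usage: cold_backup_etcd CONTAINER DATA_DIR DEST_DIR
cold_backup_etcd() {
    container=$1
    data_dir=$2
    dest_dir=$3
    archive="$dest_dir/etcd-data-$(date +%Y%m%d_%H%M%S).tar.gz"

    mkdir -p "$dest_dir" || return 1

    # Stopping the container keeps the on-disk files consistent,
    # but etcd is unavailable until it is started again.
    docker stop "$container" >/dev/null || return 1

    # -C switches to the parent directory so the archive stores relative
    # paths such as "etcd/member/...".
    tar -czf "$archive" -C "$(dirname "$data_dir")" "$(basename "$data_dir")"
    status=$?

    # Always bring etcd back, whether or not tar succeeded.
    docker start "$container" >/dev/null || status=1

    [ "$status" -eq 0 ] && echo "$archive"
    return "$status"
}
```

For example, `cold_backup_etcd etcd /var/lib/etcd /backup` would print the path of the new archive; `etcd` is an assumed container name and /backup an assumed destination.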

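etcd generates a snapshot every time the number of changes reaches the `--snapshot-count` value; the default was 10000 in older releases and was raised to 100000 in etcd v3.2.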

If you back up only the files under /var/lib/etcd/member/snap, you do not need to stop the service.

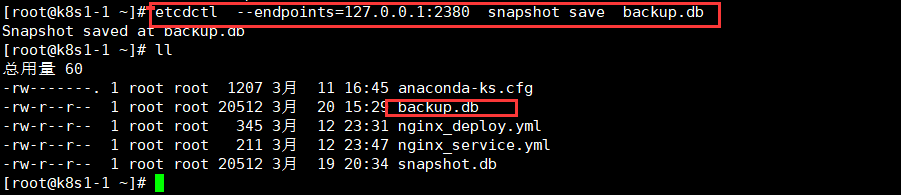

2. Back up data with the etcdctl snapshot command
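The save side of this method is not spelled out in the text. A minimal sketch using `etcdctl snapshot save`, which takes a snapshot from a running member so etcd does not need to be stopped, might look like the following. The helper name `etcd_snapshot_save` and the destination layout are hypothetical; the file name pattern mirrors the one used by the CronJob in section 3.

```shell
# Hypothetical helper: take an online snapshot through the etcd v3 API and
# print the snapshot file name. Extra etcdctl flags (for example TLS options)
# can be passed after the first two arguments.
# Usage: etcd_snapshot_save ENDPOINT DEST_DIR [etcdctl flags...]
etcd_snapshot_save() {
    endpoint=$1
    dest_dir=$2
    shift 2
    mkdir -p "$dest_dir" || return 1
    file="$dest_dir/$(date +%Y%m%d_%H%M%S)_snapshot.db"

    # ETCDCTL_API=3 is required for etcdctl older than 3.4, where v2 is the default.
    ETCDCTL_API=3 etcdctl --endpoints "$endpoint" "$@" snapshot save "$file" >/dev/null || return 1

    # Print hash, revision, total keys and size to stderr as a quick sanity check.
    ETCDCTL_API=3 etcdctl snapshot status "$file" --write-out=table >&2 || return 1

    echo "$file"
}
```

On a TLS-enabled cluster the certificate flags are passed through, for example `etcd_snapshot_save https://127.0.0.1:2379 /backup --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key`; those paths are the usual kubeadm locations and are an assumption about your setup.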

Data recovery:

```shell
ETCDCTL_API=3 etcdctl snapshot restore backup.db --data-dir=/var/lib/etcd
```
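In practice the restore is run with etcd stopped, and `snapshot restore` typically refuses a `--data-dir` that already contains data. A hedged sketch that moves the old directory aside before restoring follows; the helper name `etcd_snapshot_restore` and the `.bak-<timestamp>` suffix are hypothetical. For a multi-member cluster, each member is restored from the same snapshot with its own `--name`, `--initial-cluster`, `--initial-cluster-token` and `--initial-advertise-peer-urls` flags, which this sketch simply passes through.

```shell
# Hypothetical helper: restore a snapshot into DATA_DIR on one member.
# The old directory is renamed rather than deleted, so it can be put back.
# Stop etcd before running this and start it again afterwards.
# Usage: etcd_snapshot_restore SNAPSHOT DATA_DIR [etcdctl restore flags...]
etcd_snapshot_restore() {
    snapshot=$1
    data_dir=$2
    shift 2

    [ -f "$snapshot" ] || { echo "snapshot not found: $snapshot" >&2; return 1; }

    if [ -e "$data_dir" ]; then
        # Move the existing data out of the way instead of overwriting it.
        mv "$data_dir" "$data_dir.bak-$(date +%Y%m%d_%H%M%S)" || return 1
    fi

    ETCDCTL_API=3 etcdctl snapshot restore "$snapshot" --data-dir="$data_dir" "$@"
}
```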

3. Use a Kubernetes CronJob for regular automatic backups

Here, the scheduled task (CronJob) provided by k8s runs the backup. The pod of the scheduled task must run on the same node as the etcd pod, which is enforced with nodeAffinity, because the job uses the host network and reaches etcd at 127.0.0.1.

YAML file (not verified):

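```yaml
apiVersion: batch/v1   # batch/v2alpha1 is no longer served; CronJob is batch/v1 since Kubernetes 1.21 (batch/v1beta1 on older clusters)
kind: CronJob
metadata:
  name: etcd-disaster-recovery
  namespace: cron
spec:
  schedule: "0 22 * * *"   # every day at 22:00
  jobTemplate:
    spec:
      template:
        metadata:
          labels:
            app: etcd-disaster-recovery
        spec:
          # Run on a master node so that 127.0.0.1:2379 reaches the local etcd.
          # Adjust the label to whatever your control-plane nodes actually carry.
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: kubernetes.io/role
                    operator: In
                    values:
                    - master
          # Master nodes are often tainted NoSchedule; without this the pod may never be scheduled.
          tolerations:
          - key: node-role.kubernetes.io/master
            operator: Exists
            effect: NoSchedule
          containers:
          - name: etcd
            image: coreos/etcd:v3.0.17   # the etcdctl version should be compatible with the cluster's etcd
            command:
            - sh
            - -c
            - |
              export ETCDCTL_API=3
              # "&&" makes the container exit non-zero when the snapshot fails,
              # so restartPolicy: OnFailure actually retries.
              etcdctl --endpoints "$ENDPOINT" snapshot save "/snapshot/$(date +%Y%m%d_%H%M%S)_snapshot.db" &&
                echo "etcd backup success"
            env:
            - name: ENDPOINT
              value: "127.0.0.1:2379"   # a TLS-enabled etcd also needs --cacert/--cert/--key
            volumeMounts:
            - mountPath: "/snapshot"
              name: snapshot
              subPath: data/etcd-snapshot
            - mountPath: /etc/localtime
              name: lt-config
            - mountPath: /etc/timezone
              name: tz-config
          restartPolicy: OnFailure
          volumes:
          - name: snapshot
            persistentVolumeClaim:
              claimName: cron-nas
          - name: lt-config
            hostPath:
              path: /etc/localtime
          - name: tz-config
            hostPath:
              path: /etc/timezone
          hostNetwork: true
```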
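This job writes a new snapshot file into the same volume every night and never removes old ones. A hypothetical pruning helper, which is not part of the original job, could keep only the newest N snapshots. It is a minimal sketch that relies on the fixed-width `YYYYmmdd_HHMMSS_snapshot.db` names sorting in chronological order; the name `prune_snapshots` is made up for illustration.

```shell
# Hypothetical helper: keep only the newest KEEP *_snapshot.db files in DIR.
# Glob results are sorted, and the fixed-width timestamps in the file names
# make that order chronological, so the oldest files come first.
# Usage: prune_snapshots DIR KEEP
prune_snapshots() {
    dir=$1
    keep=$2
    set -- "$dir"/*_snapshot.db
    # If nothing matched, the pattern is left as-is and does not exist.
    [ -e "$1" ] || return 0
    excess=$(($# - keep))
    while [ "$excess" -gt 0 ]; do
        rm -f -- "$1"
        shift
        excess=$((excess - 1))
    done
}
```

If the function is defined in the container's script, a line such as `prune_snapshots /snapshot 7` after the backup would keep a week of nightly snapshots; the retention count is an arbitrary example.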