Hello, I'm Jack.

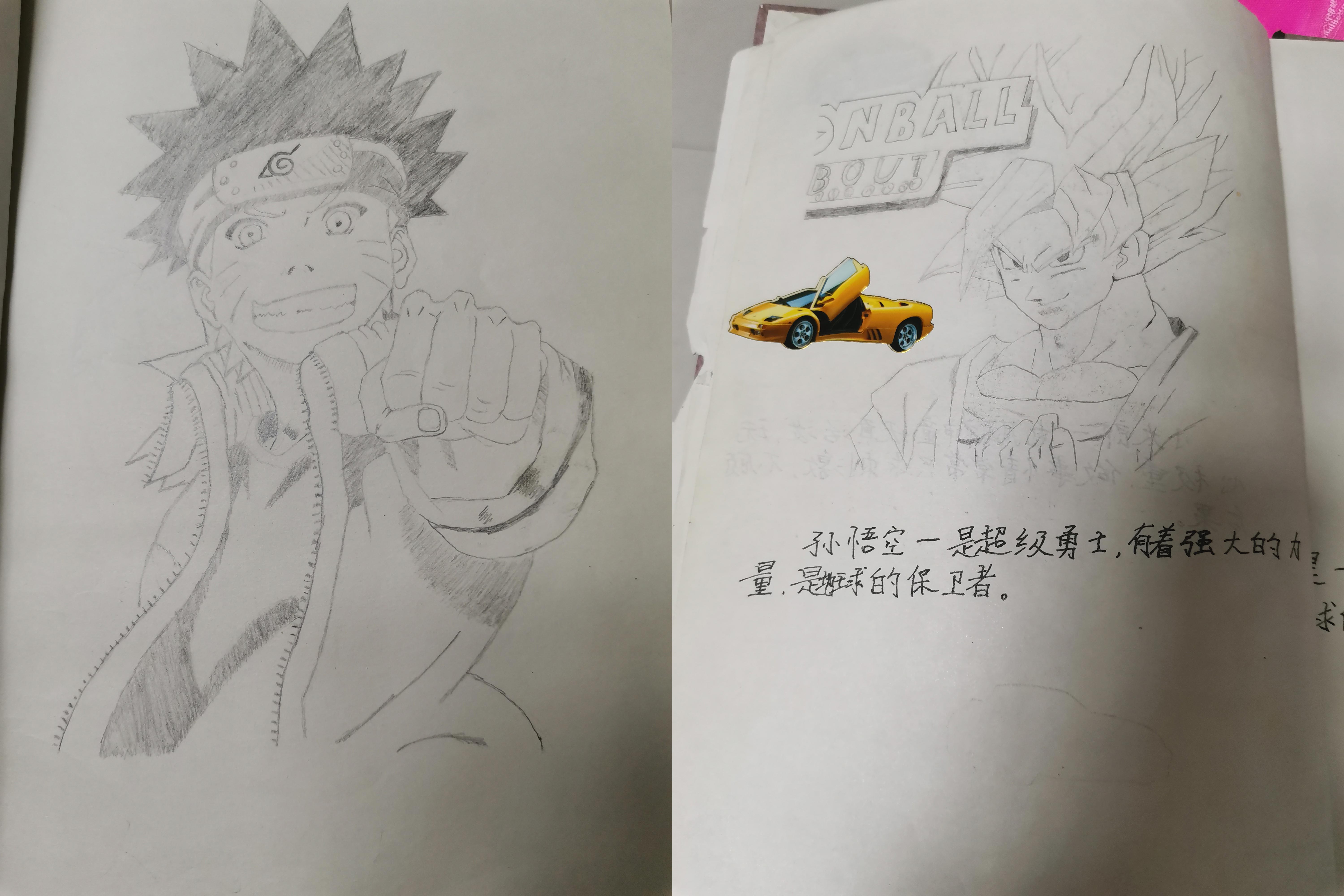

When I was a child, I was actually a bit artistic. I liked watching Naruto and Dragon Ball, and although I never took drawing lessons, I clumsily drew a lot of pictures.

I asked my mother to dig out the tattered little notebook I have kept for many years, so that I could share some of my childhood happiness.

I can't remember what I drew in the first grade of primary school. I only remember that one drawing took nearly half a day, and that I took it to school to show off.

Now, if you asked me to pick up a pencil and draw a sketch, I couldn't do it.

However, I found another way: an algorithm. And no, that's not cheating!

Anime2Sketch

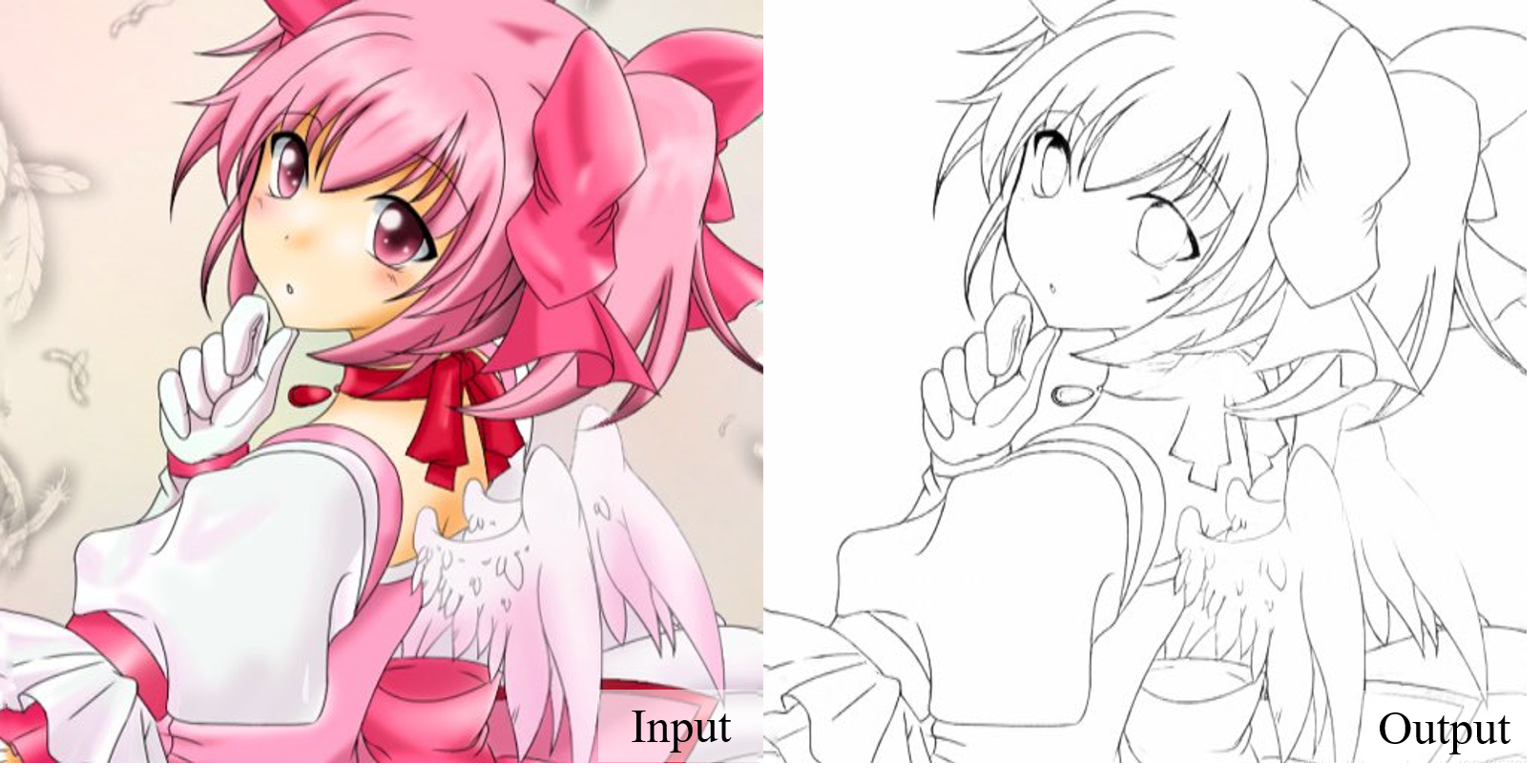

Anime2Sketch is a sketch extractor for anime, comics, illustrations, and other artwork.

Give me a work of art and I'll turn it directly into a sketch:

A sketch copied in one second:

The Anime2Sketch algorithm is also very simple: it uses a UNet to generate the sketch. Here is its network structure:

    import functools

    import torch
    import torch.nn as nn


    class UnetGenerator(nn.Module):
        """Create a Unet-based generator"""

        def __init__(self, input_nc, output_nc, num_downs, ngf=64, norm_layer=nn.BatchNorm2d, use_dropout=False):
            """Construct a Unet generator

            Parameters:
                input_nc (int)  -- the number of channels in input images
                output_nc (int) -- the number of channels in output images
                num_downs (int) -- the number of downsamplings in UNet. For example, if |num_downs| == 7,
                                   an image of size 128x128 will become of size 1x1 at the bottleneck
                ngf (int)       -- the number of filters in the last conv layer
                norm_layer      -- normalization layer

            We construct the U-Net from the innermost layer to the outermost layer.
            It is a recursive process.
            """
            super(UnetGenerator, self).__init__()
            # construct unet structure
            unet_block = UnetSkipConnectionBlock(ngf * 8, ngf * 8, input_nc=None, submodule=None, norm_layer=norm_layer, innermost=True)  # add the innermost layer
            for _ in range(num_downs - 5):  # add intermediate layers with ngf * 8 filters
                unet_block = UnetSkipConnectionBlock(ngf * 8, ngf * 8, input_nc=None, submodule=unet_block, norm_layer=norm_layer, use_dropout=use_dropout)
            # gradually reduce the number of filters from ngf * 8 to ngf
            unet_block = UnetSkipConnectionBlock(ngf * 4, ngf * 8, input_nc=None, submodule=unet_block, norm_layer=norm_layer)
            unet_block = UnetSkipConnectionBlock(ngf * 2, ngf * 4, input_nc=None, submodule=unet_block, norm_layer=norm_layer)
            unet_block = UnetSkipConnectionBlock(ngf, ngf * 2, input_nc=None, submodule=unet_block, norm_layer=norm_layer)
            self.model = UnetSkipConnectionBlock(output_nc, ngf, input_nc=input_nc, submodule=unet_block, outermost=True, norm_layer=norm_layer)  # add the outermost layer

        def forward(self, input):
            """Standard forward"""
            return self.model(input)


    class UnetSkipConnectionBlock(nn.Module):
        """Defines the Unet submodule with skip connection.

        X -------------------identity----------------------
        |-- downsampling -- |submodule| -- upsampling --|
        """

        def __init__(self, outer_nc, inner_nc, input_nc=None,
                     submodule=None, outermost=False, innermost=False, norm_layer=nn.BatchNorm2d, use_dropout=False):
            """Construct a Unet submodule with skip connections.

            Parameters:
                outer_nc (int) -- the number of filters in the outer conv layer
                inner_nc (int) -- the number of filters in the inner conv layer
                input_nc (int) -- the number of channels in input images/features
                submodule (UnetSkipConnectionBlock) -- previously defined submodules
                outermost (bool)   -- if this module is the outermost module
                innermost (bool)   -- if this module is the innermost module
                norm_layer         -- normalization layer
                use_dropout (bool) -- if use dropout layers.
            """
            super(UnetSkipConnectionBlock, self).__init__()
            self.outermost = outermost
            # InstanceNorm2d is created without affine parameters here, so the convs keep their bias
            if type(norm_layer) == functools.partial:
                use_bias = norm_layer.func == nn.InstanceNorm2d
            else:
                use_bias = norm_layer == nn.InstanceNorm2d
            if input_nc is None:
                input_nc = outer_nc
            downconv = nn.Conv2d(input_nc, inner_nc, kernel_size=4,
                                 stride=2, padding=1, bias=use_bias)
            downrelu = nn.LeakyReLU(0.2, True)
            downnorm = norm_layer(inner_nc)
            uprelu = nn.ReLU(True)
            upnorm = norm_layer(outer_nc)

            if outermost:
                upconv = nn.ConvTranspose2d(inner_nc * 2, outer_nc,
                                            kernel_size=4, stride=2,
                                            padding=1)
                down = [downconv]
                up = [uprelu, upconv, nn.Tanh()]
                model = down + [submodule] + up
            elif innermost:
                upconv = nn.ConvTranspose2d(inner_nc, outer_nc,
                                            kernel_size=4, stride=2,
                                            padding=1, bias=use_bias)
                down = [downrelu, downconv]
                up = [uprelu, upconv, upnorm]
                model = down + up
            else:
                upconv = nn.ConvTranspose2d(inner_nc * 2, outer_nc,
                                            kernel_size=4, stride=2,
                                            padding=1, bias=use_bias)
                down = [downrelu, downconv, downnorm]
                up = [uprelu, upconv, upnorm]
                if use_dropout:
                    model = down + [submodule] + up + [nn.Dropout(0.5)]
                else:
                    model = down + [submodule] + up

            self.model = nn.Sequential(*model)

        def forward(self, x):
            if self.outermost:
                return self.model(x)
            else:  # add skip connections
                return torch.cat([x, self.model(x)], 1)


    def create_model(gpu_ids=[]):
        """Create a model for anime2sketch

        hardcoding the options for simplicity
        """
        norm_layer = functools.partial(nn.InstanceNorm2d, affine=False, track_running_stats=False)
        net = UnetGenerator(3, 1, 8, 64, norm_layer=norm_layer, use_dropout=False)
        ckpt = torch.load('weights/netG.pth')
        # strip the 'module.' prefix left in the checkpoint keys
        for key in list(ckpt.keys()):
            if 'module.' in key:
                ckpt[key.replace('module.', '')] = ckpt[key]
                del ckpt[key]
        net.load_state_dict(ckpt)
        if len(gpu_ids) > 0:
            assert(torch.cuda.is_available())
            net.to(gpu_ids[0])
            net = torch.nn.DataParallel(net, gpu_ids)  # multi-GPUs
        return net

UNet should be very familiar by now, so I won't go into more detail.
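To make the `num_downs` note in the listing concrete, here is a minimal sketch in plain Python (no PyTorch needed) that traces the spatial size through the eight stride-2 layers configured in `create_model`. The helper names `conv_out`, `deconv_out`, and `trace_unet` are my own and not part of the project; the formulas are the standard output-size formulas for `nn.Conv2d` and `nn.ConvTranspose2d`, assuming dilation 1 and no output padding.

```python
def conv_out(size, kernel=4, stride=2, padding=1):
    """Output size of nn.Conv2d along one spatial dimension (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def deconv_out(size, kernel=4, stride=2, padding=1):
    """Output size of nn.ConvTranspose2d along one dimension (no output_padding)."""
    return (size - 1) * stride - 2 * padding + kernel


def trace_unet(size, num_downs=8):
    """Return the spatial sizes seen on the way down and on the way back up."""
    down = [size]
    for _ in range(num_downs):
        down.append(conv_out(down[-1]))
    up = [down[-1]]
    for _ in range(num_downs):
        up.append(deconv_out(up[-1]))
    return down, up


down, up = trace_unet(512)
print(down)  # [512, 256, 128, 64, 32, 16, 8, 4, 2]
print(up)    # [2, 4, 8, 16, 32, 64, 128, 256, 512]
```

With a 512x512 input the bottleneck is 2x2, while a 256x256 input reaches 1x1 after eight downsamplings. Since every level halves the size and the skip connection concatenates tensors of matching size, the input side length needs to be a multiple of 2^8 = 256 for the shapes to line up.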

Project address: https://github.com/Mukosame/Anime2Sketch

Setting up the environment is also very simple. You only need to install the following three libraries:

    torch>=0.4.1
    torchvision>=0.2.1
    Pillow>=6.0.0
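If you are unsure whether an existing environment already satisfies these minimums, a quick check could look like the sketch below. It uses only the standard library (`importlib.metadata`, Python 3.8+); the helper names `parse_version` and `check_requirements` are hypothetical, and the version parsing is deliberately naive (it keeps only the leading numeric parts).

```python
import re
from importlib import metadata

# Minimum versions as listed above.
REQUIREMENTS = {"torch": "0.4.1", "torchvision": "0.2.1", "Pillow": "6.0.0"}


def parse_version(text):
    """Turn '2.1.0+cu118' into (2, 1, 0); ignores any non-numeric suffix."""
    parts = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def check_requirements(requirements=REQUIREMENTS):
    """Return {package: (installed_version_or_None, ok)} for each requirement."""
    report = {}
    for name, minimum in requirements.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = (None, False)
            continue
        ok = parse_version(installed) >= parse_version(minimum)
        report[name] = (installed, ok)
    return report


for name, (installed, ok) in check_requirements().items():
    print(f"{name}: installed={installed} ok={ok}")
```

A package that is not installed is reported with `installed=None` and `ok=False`.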

Then download the weight file.

The weight file is hosted on Google Drive. For your convenience, I have packaged the code, the weight file, and some test images.

Download it and run it directly (extraction code: a7r4):

https://pan.baidu.com/s/1h6bqgphqUUjj4fz61Y9HCA
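Once the weight file is in place, `create_model` in the listing above renames checkpoint keys that contain `module.`, which is the prefix `torch.nn.DataParallel` adds to parameter names when a wrapped model is saved. Here is a minimal sketch of that renaming on a plain dictionary. The helper `strip_module_prefix` and the keys and values below are made up for illustration; a real checkpoint maps such names to tensors.

```python
def strip_module_prefix(state_dict):
    """Return a copy of state_dict with a leading 'module.' removed from each key."""
    cleaned = {}
    for key, value in state_dict.items():
        if key.startswith("module."):
            key = key[len("module."):]
        cleaned[key] = value
    return cleaned


# Hypothetical keys; a real checkpoint maps such names to tensors.
ckpt = {"module.model.model.0.weight": "w0", "model.model.0.bias": "b0"}
print(strip_module_prefix(ckpt))
# {'model.model.0.weight': 'w0', 'model.model.0.bias': 'b0'}
```

Note that this sketch only removes a leading prefix and builds a new dictionary, whereas the listing uses `key.replace('module.', '')` and edits the checkpoint in place. For keys of the form shown here, the two approaches give the same names.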

Enter the project root directory and run the command:

    python3 test.py --dataroot test_samples --load_size 512 --output_dir results
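For readers curious how the three flags in that command might be handled, here is a rough sketch using `argparse`. It only assumes the flag names shown in the command; the defaults, help texts, the list of image suffixes, and the `build_parser` and `list_images` helpers are hypothetical and not taken from the project's `test.py`.

```python
import argparse
from pathlib import Path

IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg", ".bmp"}  # assumed set of image types


def build_parser():
    """Build a parser for the three flags used in the command above."""
    parser = argparse.ArgumentParser(description="Sketch extraction (illustrative CLI)")
    parser.add_argument("--dataroot", type=Path, default=Path("test_samples"),
                        help="image file or folder of input images")
    parser.add_argument("--load_size", type=int, default=512,
                        help="side length the input is resized to")
    parser.add_argument("--output_dir", type=Path, default=Path("results"),
                        help="folder the sketches are written to")
    return parser


def list_images(dataroot):
    """Return the image files under dataroot (a single file or a folder), sorted."""
    if dataroot.is_file():
        return [dataroot]
    if not dataroot.is_dir():
        return []
    return sorted(p for p in dataroot.iterdir() if p.suffix.lower() in IMAGE_SUFFIXES)


args = build_parser().parse_args(
    ["--dataroot", "test_samples", "--load_size", "512", "--output_dir", "results"]
)
print(args.load_size)  # 512
print(len(list_images(args.dataroot)), "image(s) found")
```

Passing an explicit argument list to `parse_args` keeps the sketch runnable without a real command line.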

Results:

The "painting" is very fast. I found some pictures online to test it.

Naruto and Obito:

Conan and Haibara:

Ramble

Before using the algorithm:

Such a sketch has no soul!

After using the algorithm:

I also tested some photos of real people, and the results were very poor. Sure enough, the lines in photos of real people are more complex.