1, Production crime scene and troubleshooting process

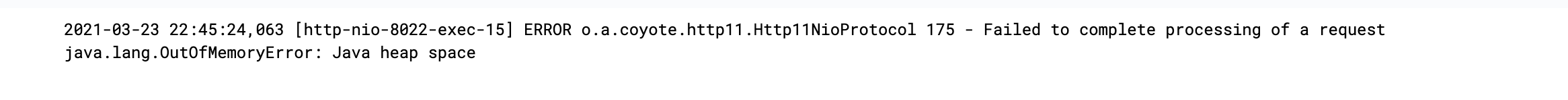

At 22:46:06 on March 23, the front service went down and raised an alarm. Checking the system log:

You can see a memory overflow (OOM).

My first guess was that it was related to a configuration change released that afternoon:

server.maxHttpHeaderSize: 10240000

(First roll back the image and restore production...)

A guess is only a guess. We still need evidence.

The JVM parameters of the service:

java -Xms1024m -Xmx1024m -XX:MetaspaceSize=512m -XX:MaxMetaspaceSize=512m -XX:SoftRefLRUPolicyMSPerMB=5000 -XX:+PrintGCDetails -Xloggc:/data/logs/saas-front/log/gc/gc.log -XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/data/logs/saas-front/log/oom -javaagent:/usr/local/skywalking/skywalking-agent/skywalking-agent.jar -Dskywalking.agent.service_name=saas-front-aws_us_west_2 -jar saas-front.jar --spring.profiles.active=aws_us_west_2

So we can go to the /data/logs/saas-front/log/oom directory, fetch the heap dump written at the time of the OOM, and analyze it with the Eclipse Memory Analyzer tool.

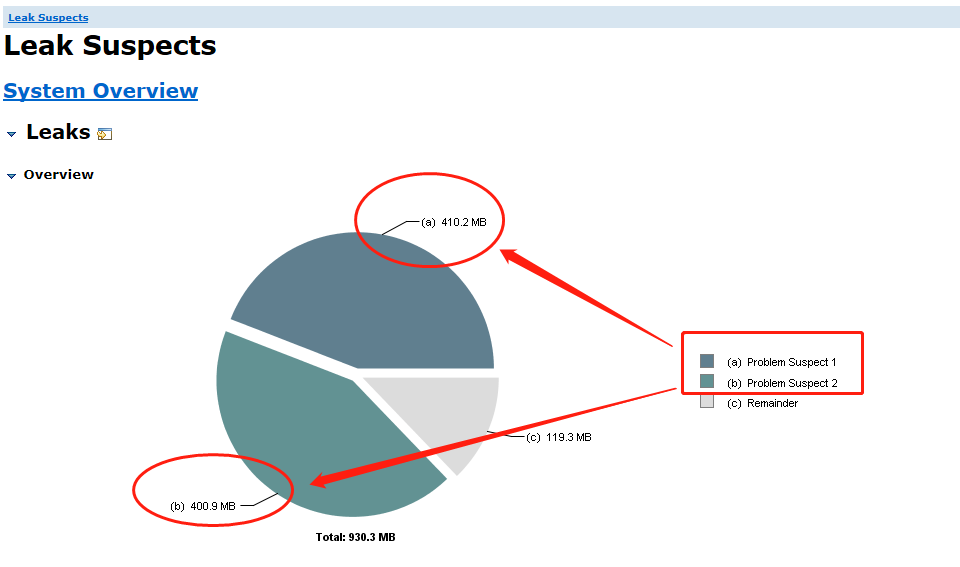

The Leak Suspects report quickly points to two problem suspects:

Problem Suspect 1:

42 instances of "org.apache.coyote.http11.Http11OutputBuffer", loaded by "sun.misc.Launcher$AppClassLoader @ 0xc0015438" occupy 430,106,544 (44.09%) bytes. Biggest instances: org.apache.coyote.http11.Http11OutputBuffer @ 0xc5860360 - 10,240,632 (1.05%) bytes. org.apache.coyote.http11.Http11OutputBuffer @ 0xc586b6b0 - 10,240,632 (1.05%) bytes. org.apache.coyote.http11.Http11OutputBuffer @ 0xc7b733a0 - 10,240,632 (1.05%) bytes. org.apache.coyote.http11.Http11OutputBuffer @ 0xca055528 - 10,240,632 (1.05%) bytes. org.apache.coyote.http11.Http11OutputBuffer @ 0xccc2d290 - 10,240,632 (1.05%) bytes. org.apache.coyote.http11.Http11OutputBuffer @ 0xcd5f51f0 - 10,240,632 (1.05%) bytes. ......

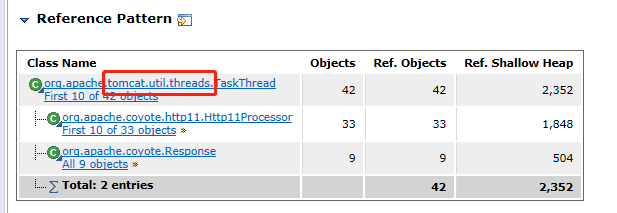

There are 42 Http11OutputBuffer instances, each occupying about 10 MB, for a total of 430,106,544 bytes (44.09% of the heap).

This is space occupied by Tomcat's worker threads.
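The figures quoted in Problem Suspect 1 can be cross-checked with a little arithmetic. The sketch below only uses numbers from the report and the configuration above; the class and method names are made up for illustration. It suggests that each Http11OutputBuffer is essentially one buffer of the configured header size plus a small amount of object overhead.

```java
// Cross-check of the Leak Suspects numbers quoted above (illustrative names).
final class LeakSuspectMath {
    // Total retained bytes for a number of equally sized instances.
    static long totalBytes(int instances, long bytesPerInstance) {
        return instances * bytesPerInstance;
    }

    // Bytes retained by one instance beyond the configured header size.
    static long overheadBytes(long bytesPerInstance, long configuredHeaderSize) {
        return bytesPerInstance - configuredHeaderSize;
    }

    public static void main(String[] args) {
        long perInstance = 10_240_632L; // retained size of one Http11OutputBuffer (from the report)
        long configured = 10_240_000L;  // server.maxHttpHeaderSize from the new configuration
        System.out.println("total bytes        = " + totalBytes(42, perInstance));
        System.out.println("overhead per buffer = " + overheadBytes(perInstance, configured));
    }
}
```

The total matches the 430,106,544 bytes reported for the 42 instances, which fits the guess that the new header size setting is what inflated these objects.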

Problem Suspect 2:

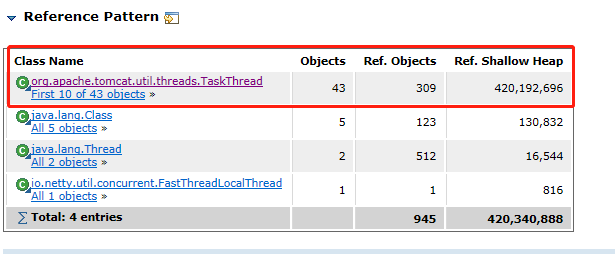

945 instances of "byte[]", loaded by "<system class loader>" occupy 420,340,888 (43.09%) bytes. Biggest instances: byte[10248192] @ 0xc4bc2c10 GET / HTTP/1.1..host:honedora.comm..x-real-ip:43.245.160.455..x-forwarded-for:43.245.160.455..user-agent:Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.108 Safari/537.366..accept-language:en-US,en;q=0.55..... - 10,248,208 (1.05%) bytes. byte[10248192] @ 0xc70776d8 GET / HTTP/1.1..host:thenicegoods.comm..x-real-ip:43.245.160.455..x-forwarded-for:43.245.160.455..user-agent:Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.108 Safari/537.366..accept-language:en-US,en;q=0.... - 10,248,208 (1.05%) bytes. byte[10248192] @ 0xc7b7bc00 GET /prometheus HTTP/1.1..host:172.30.2.144:180222..user-agent:Prometheus/2.17.11..accept:application/openmetrics-text; version=0.0.1,text/plain;version=0.0.4;q=0.5,*/*;q=0.11..accept-encoding:gzipp..x-prometheus-scrape-timeout-seconds:10.0000000............. - 10,248,208 (1.05%) bytes. byte[10248192] @ 0xc943e7e8 GET //website/wp-includes/wlwmanifest.xml HTTP/1.1..host:cattyy.comm..x-real-ip:43.245.160.455..x-forwarded-for:43.245.160.455..cookie:client_id=5718685622856744966..user-agent:Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko... - 10,248,208 (1.05%) bytes. ......

There are 945 byte[] instances occupying 420,340,888 bytes in total (43.09% of the heap). The largest are about 10 MB each and hold raw HTTP request data.

This is also space occupied by Tomcat's worker threads.

At this point it is fairly clear that the problem lies with the maximum HTTP header size configured for Tomcat.

2, Why this parameter was set: maxHttpHeaderSize

Earlier, I had found that visiting a certain product page returned a 500 error:

The error log of the service showed:

    10:29:08.901 user [http-nio-8022-exec-9] ERROR o.a.coyote.http11.Http11Processor - Error processing request
    org.apache.coyote.http11.HeadersTooLargeException: An attempt was made to write more data to the response headers than there was room available in the buffer. Increase maxHttpHeaderSize on the connector or write less data into the response headers.
        at org.apache.coyote.http11.Http11OutputBuffer.checkLengthBeforeWrite(Http11OutputBuffer.java:464)
        at org.apache.coyote.http11.Http11OutputBuffer.write(Http11OutputBuffer.java:417)
        at org.apache.coyote.http11.Http11OutputBuffer.write(Http11OutputBuffer.java:403)
        at org.apache.coyote.http11.Http11OutputBuffer.sendHeader(Http11OutputBuffer.java:363)
        at org.apache.coyote.http11.Http11Processor.prepareResponse(Http11Processor.java:975)
        at org.apache.coyote.AbstractProcessor.action(AbstractProcessor.java:369)
        at org.apache.coyote.Response.action(Response.java:211)
        at org.apache.coyote.Response.sendHeaders(Response.java:437)
        at org.apache.catalina.connector.OutputBuffer.doFlush(OutputBuffer.java:281)
        at org.apache.catalina.connector.OutputBuffer.close(OutputBuffer.java:241)
        at org.apache.catalina.connector.Response.finishResponse(Response.java:440)
        at org.apache.catalina.connector.CoyoteAdapter.service(CoyoteAdapter.java:374)
        at org.apache.coyote.http11.Http11Processor.service(Http11Processor.java:408)
        at org.apache.coyote.AbstractProcessorLight.process(AbstractProcessorLight.java:66)
        at org.apache.coyote.AbstractProtocol$ConnectionHandler.process(AbstractProtocol.java:853)
        at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1587)
        at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:49)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
        at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61)
        at java.lang.Thread.run(Thread.java:748)

The error message says that the buffer for the response headers is too small. It suggests either increasing maxHttpHeaderSize or writing less data into the response headers.

My solution at that time:

1. Following advice found online, configure server.maxHttpHeaderSize directly in Spring Boot and set it to about 10 MB.

2. Then track down the URL that wrote the oversized response header and change the code logic to cut out the unnecessary data.

There is an interface, www.baidu.com/products/shoe, whose execution flow is:

① Query goods

② An interceptor populates the store's common model

③ Decide, according to some logic, whether the link needs to be redirected

- true: redirect

- false: return and render the product details page

In the redirect case, the model attributes are appended to the link as a large number of query parameters:

www.baidu.com/products/new-shoe?Cardconfig=***&name=***&customcodelist=***&devicetype=*** (rest omitted)

In addition, the Location response header is set to the redirect URL above, to tell the browser where to redirect. (This is the cause of the error.)
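To make the cause more concrete, here is a minimal sketch of how a redirect URL built from model attributes can outgrow the default 8192-byte header buffer. The parameter values, the 3000-character filler, and all class and method names are assumptions for illustration; only the URL path and the 8192-byte default come from this article. Since the buffer must hold the status line and every response header, a Location line that is too large on its own is already enough to trigger the error.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;

final class RedirectLocationSize {
    // Tomcat's default maxHttpHeaderSize in bytes.
    static final int DEFAULT_MAX_HTTP_HEADER_SIZE = 8192;

    // Appends every model attribute to the base URL as a query parameter.
    static String buildLocation(String baseUrl, Map<String, String> model) {
        StringBuilder location = new StringBuilder(baseUrl);
        char separator = '?';
        for (Map.Entry<String, String> entry : model.entrySet()) {
            location.append(separator)
                    .append(encode(entry.getKey()))
                    .append('=')
                    .append(encode(entry.getValue()));
            separator = '&';
        }
        return location.toString();
    }

    // True if the Location header line alone is larger than the default buffer.
    static boolean exceedsHeaderBuffer(String location) {
        String headerLine = "Location: " + location + "\r\n";
        return headerLine.getBytes(StandardCharsets.ISO_8859_1).length > DEFAULT_MAX_HTTP_HEADER_SIZE;
    }

    // Produces a dummy value of the given length (stand-in for real model data).
    static String filler(int length) {
        char[] chars = new char[length];
        Arrays.fill(chars, 'x');
        return new String(chars);
    }

    private static String encode(String value) {
        try {
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException(e); // UTF-8 is always available
        }
    }

    public static void main(String[] args) {
        Map<String, String> model = new LinkedHashMap<>();
        // Hypothetical attribute sizes; the real values are not shown in the article.
        for (String key : new String[] {"Cardconfig", "name", "customcodelist", "devicetype"}) {
            model.put(key, filler(3000));
        }
        String location = buildLocation("/products/new-shoe", model);
        System.out.println("Location length: " + location.length());
        System.out.println("Exceeds 8192 bytes: " + exceedsHeaderBuffer(location));
    }
}
```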

When the product details page is returned instead, the model is used for rendering the page or for the page's JS logic.

So I added a check in the interceptor: if the request results in a redirect, the model is not set.
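The check can be sketched roughly as follows. This is plain Java rather than the real interceptor, and it assumes the redirect is recognizable by a view name starting with "redirect:" (the Spring MVC convention); the article does not show how the actual code detects a redirect, and every name here is hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class StoreModelInterceptorSketch {
    // Assumption: a redirect is signalled by the Spring MVC "redirect:" view name prefix.
    static boolean isRedirect(String viewName) {
        return viewName != null && viewName.startsWith("redirect:");
    }

    // Hypothetical stand-in for the interceptor step that fills the common store model.
    static Map<String, Object> populateModel(String viewName, Map<String, Object> storeAttributes) {
        Map<String, Object> model = new LinkedHashMap<>();
        if (isRedirect(viewName)) {
            // Redirect: leave the model empty so nothing is appended to the Location URL.
            return model;
        }
        model.putAll(storeAttributes);
        return model;
    }

    public static void main(String[] args) {
        Map<String, Object> attrs = new LinkedHashMap<>();
        attrs.put("name", "***");
        attrs.put("devicetype", "***");
        System.out.println(populateModel("redirect:/products/new-shoe", attrs));
        System.out.println(populateModel("product-detail", attrs));
    }
}
```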

Changes 1 and 2 were then both released. Not long afterwards, the OOM described above appeared...

3, Let's talk about maxHttpHeaderSize

maxHttpHeaderSize is a parameter of the Tomcat server; its default is 8 KB. The embedded Tomcat in Spring Boot also defaults to 8 KB.

In a standalone Tomcat, maxHttpHeaderSize can also be set in server.xml:

<Connector port="8099" protocol="HTTP/1.1" maxHttpHeaderSize="8192" connectionTimeout="20000" redirectPort="8443" />

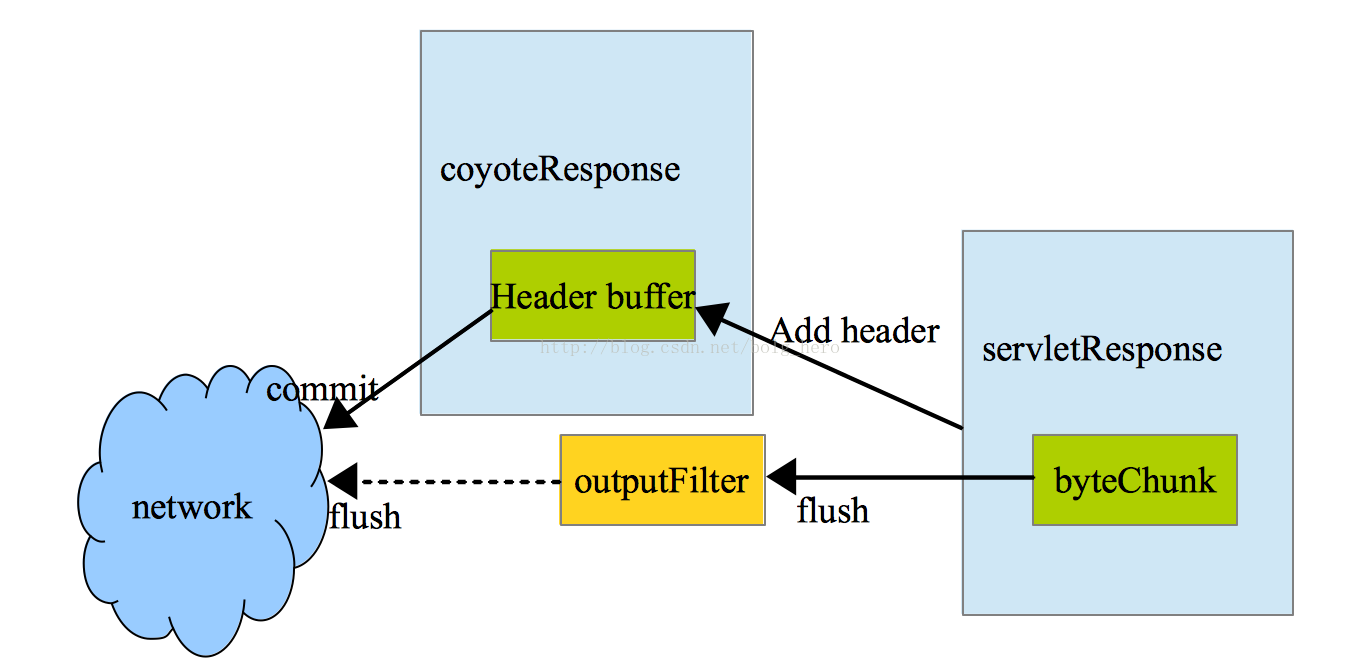

Tomcat implements the request and response of the servlet specification, and each has a buffer that caches the HTTP header information.

As shown in the figure below, the Header Buffer of coyoteResponse controls the storage of the HTTP headers.

This Header Buffer cannot grow. Its fixed size (8192 bytes by default) is set when Tomcat starts. Neither the request headers (input) nor the response headers (output) can exceed this size.

The fixed size is configured by maxHttpHeaderSize.

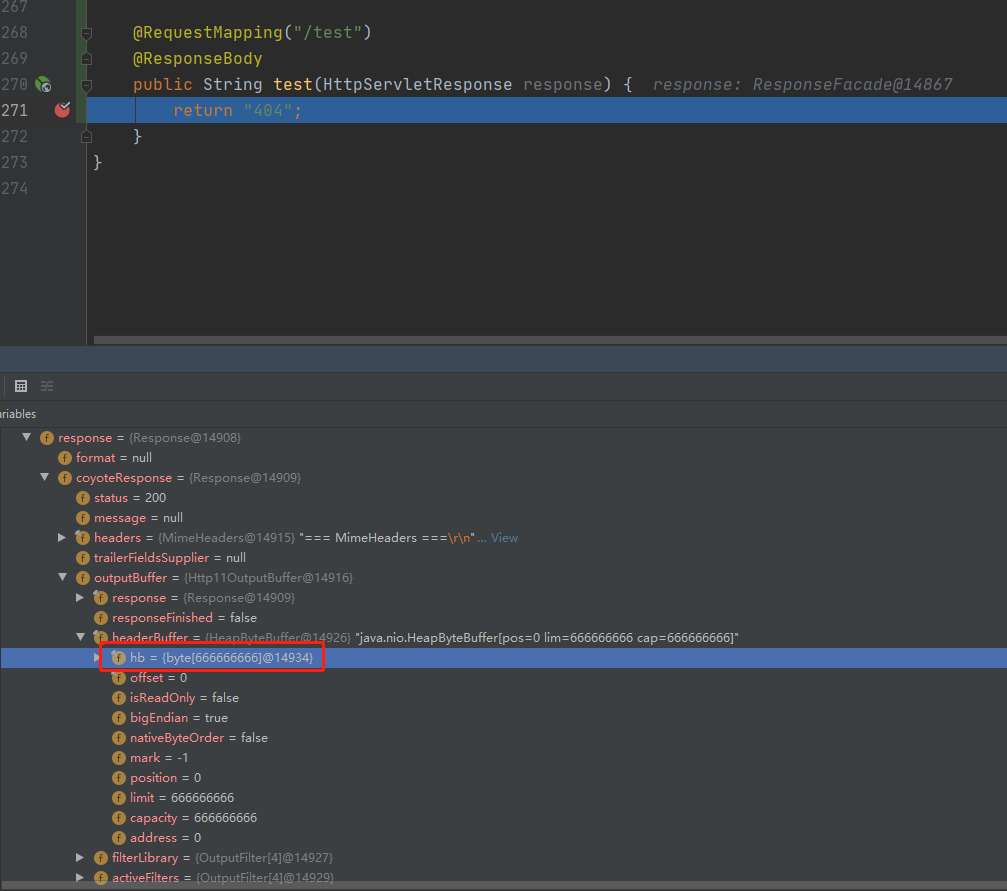

We can verify this with an example.

Spring Boot configuration:

server.max-http-header-size: 666666666

Write a test interface and check it in the debugger:

You can see that there is indeed a headerBuffer object under coyoteResponse. It has an hb attribute, which is a byte array whose size matches the configured value.
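The hb attribute seen in the debugger is the name of the backing array field of a heap java.nio.ByteBuffer. Assuming the header buffer is such a ByteBuffer allocated with the configured size (an assumption based on the debugger view, not on reading the Tomcat source here), the following sketch shows the two properties that matter: the whole array is allocated up front, and the buffer never grows when more data is written. The class and method names are illustrative.

```java
import java.nio.BufferOverflowException;
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

final class FixedHeaderBufferDemo {
    // Length of the array backing a heap ByteBuffer of the given capacity.
    static int backingArrayLength(int bufferSize) {
        return ByteBuffer.allocate(bufferSize).array().length;
    }

    // Returns true if the header bytes fit into a buffer of the given fixed size.
    static boolean fits(int bufferSize, String headerLine) {
        ByteBuffer headerBuffer = ByteBuffer.allocate(bufferSize); // full array allocated immediately
        try {
            headerBuffer.put(headerLine.getBytes(StandardCharsets.ISO_8859_1));
            return true;
        } catch (BufferOverflowException e) {
            return false; // a heap ByteBuffer is never enlarged on write
        }
    }

    public static void main(String[] args) {
        System.out.println("backing array length: " + backingArrayLength(8192));
        System.out.println("short header fits:    " + fits(8192, "Location: /products/shoe\r\n"));
        System.out.println("long header fits:     " + fits(16, "Location: /products/new-shoe?name=x\r\n"));
    }
}
```

Because the full array exists from the moment the buffer is created, a very large maxHttpHeaderSize costs that much memory per buffer even when the actual headers are tiny.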

4, Summary

The object shown in the earlier heap dump, org.apache.coyote.http11.Http11OutputBuffer @ 0xc5860360, corresponds to the Header Buffer described here. Each Tomcat worker thread allocates its own Header Buffer.

When the site handles many concurrent requests, these buffers occupy a large amount of memory, new threads can no longer allocate the space they need, and the service eventually hits OOM.
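A rough back-of-the-envelope estimate shows why this configuration could not survive real traffic. The sketch assumes one input and one output header buffer per request processor (matching the two suspects in the heap dump) and 200 worker threads, which is Tomcat's default maxThreads; whether this service used the default is not stated in the article. The heap size comes from -Xmx1024m. Names are illustrative.

```java
final class HeaderBufferEstimate {
    // Worst-case bytes held by header buffers: one input and one output buffer per processor.
    static long worstCaseBytes(int processors, long maxHttpHeaderSize) {
        return 2L * processors * maxHttpHeaderSize;
    }

    // Number of processors whose header buffers alone would fill the given heap.
    static long processorsToFillHeap(long heapBytes, long maxHttpHeaderSize) {
        return heapBytes / (2L * maxHttpHeaderSize);
    }

    public static void main(String[] args) {
        long heap = 1024L * 1024 * 1024; // -Xmx1024m
        int threads = 200;               // assumed: Tomcat default maxThreads
        System.out.println("default 8192:   " + worstCaseBytes(threads, 8192L) + " bytes");
        System.out.println("with 10240000:  " + worstCaseBytes(threads, 10_240_000L) + " bytes");
        System.out.println("processors to fill heap: " + processorsToFillHeap(heap, 10_240_000L));
    }
}
```

Under these assumptions, roughly fifty busy processors are enough to exhaust a 1 GB heap, which is in the same range as the 42 Http11OutputBuffer instances found in the dump.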

The maxHttpHeaderSize parameter should not be set too large. Set it just large enough for the project's needs. Also consider the size and number of the headers being sent, and whether they can be reduced instead.