Record the code.

Standard Hough transform: HoughLines() function

HoughLines(midImage, lines, 1, CV_PI/180, 150, 0, 0 );

The input image of the function must be an 8-bit, single-channel binary image.

The second parameter, lines, receives the output vector of the lines detected by the Hough line transform after HoughLines returns. Each line is represented by a two-element vector (ρ, θ), where ρ is the distance from the coordinate origin (0, 0), i.e. the upper-left corner of the image, to the line, and θ is the rotation angle of the line in radians (0 represents a vertical line and π/2 a horizontal line).

The third parameter, rho of type double, is the distance resolution in pixels, that is, the step size used for ρ when searching for lines (in LaTeX, \rho denotes ρ).

The fourth parameter, theta of type double, is the angular resolution in radians, that is, the step size used for θ when searching for lines.

The fifth parameter, threshold of type int, is the threshold of the accumulator plane: the number of votes a cell of the accumulator must reach for it to be identified as a straight line. Only lines with more votes than the threshold are detected and returned.
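The roles of rho, theta and threshold can be illustrated with a small voting sketch in plain C++. This is not OpenCV's implementation: the helper name houghVoteSketch, the PolarLine struct and the accumulator layout are assumptions made only for illustration. Every edge point votes for all (ρ, θ) cells it could lie on, and cells with more votes than threshold are reported.

```cpp
#include <cassert>
#include <cmath>
#include <utility>
#include <vector>

// A line in polar form: rho in pixels, theta in radians.
struct PolarLine { double rho; double theta; };

// Hypothetical helper (not an OpenCV function): vote in a (theta, rho)
// accumulator and return every cell with more votes than `threshold`.
std::vector<PolarLine> houghVoteSketch(const std::vector<std::pair<int, int>>& edgePoints,
                                       int width, int height,
                                       double rhoStep, double thetaStep, int threshold)
{
    assert(rhoStep > 0 && thetaStep > 0);
    const double pi = std::acos(-1.0);
    const int numTheta = static_cast<int>(std::round(pi / thetaStep));
    const double maxRho = std::hypot(width, height);  // largest possible |rho|
    const int numRho = static_cast<int>(std::round(2 * maxRho / rhoStep)) + 1;

    std::vector<int> acc(numTheta * numRho, 0);
    for (const auto& p : edgePoints) {
        for (int t = 0; t < numTheta; ++t) {
            const double theta = t * thetaStep;
            // rho = x*cos(theta) + y*sin(theta), shifted so the index is non-negative
            const double rho = p.first * std::cos(theta) + p.second * std::sin(theta);
            const int r = static_cast<int>(std::round((rho + maxRho) / rhoStep));
            ++acc[t * numRho + r];
        }
    }

    std::vector<PolarLine> lines;
    for (int t = 0; t < numTheta; ++t)
        for (int r = 0; r < numRho; ++r)
            if (acc[t * numRho + r] > threshold)  // only cells above the threshold survive
                lines.push_back({r * rhoStep - maxRho, t * thetaStep});
    return lines;
}
```

With 20 edge points on the row y = 5 of a 20x20 image, a rho step of 1 pixel and a theta step of π/180, the cell near θ = π/2 collects all 20 votes, so a threshold of 15 reports it while a threshold of 25 reports nothing.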

#include "opencv2/core.hpp"

#include <opencv2/imgproc.hpp>

#include "opencv2/video.hpp"

#include "opencv2/videoio.hpp"

#include "opencv2/highgui.hpp"

#include"opencv2/imgproc/imgproc.hpp"

#include<opencv2/opencv.hpp>

#include <iostream>

using namespace std;

using namespace cv;

int main()

{

//[1] Load original drawing and Mat variable definition

Mat srcImage = imread("/home/heziyi/picture/6.jpg"); //Load the source image; change the path to an image on your machine

Mat midImage,dstImage;//Definition of temporary variables and target graph

//[2] Edge detection and conversion of the edge map to a BGR image

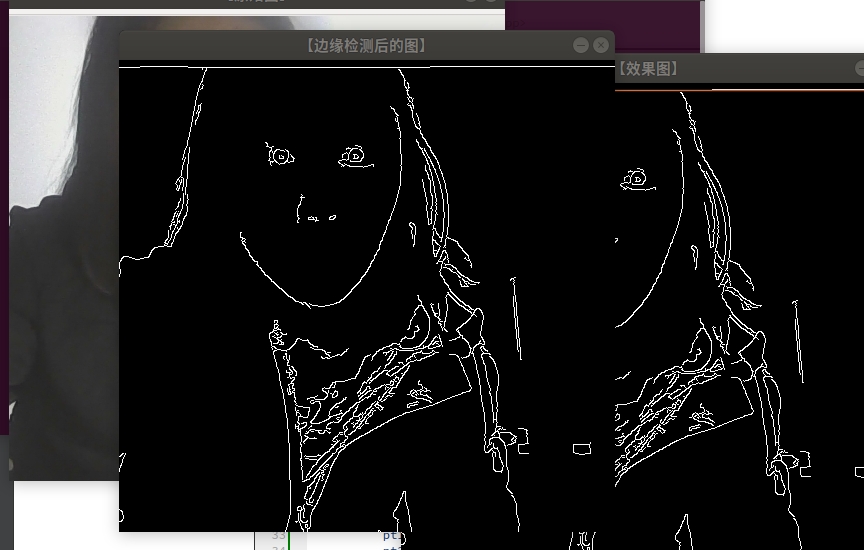

Canny(srcImage, midImage, 50, 200, 3);//Perform a canny edge detection

cvtColor(midImage,dstImage, COLOR_GRAY2BGR);//Convert the single-channel edge map to BGR so that colored lines can be drawn on it

//[3] Perform Hough line transformation

vector<Vec2f> lines;//Vector that stores the detected lines as (rho, theta) pairs

HoughLines(midImage, lines, 1, CV_PI/180, 150, 0, 0 );

//[4] Draw each line in the figure in turn

for( size_t i = 0; i < lines.size(); i++ )

{

float rho = lines[i][0], theta = lines[i][1];

Point pt1, pt2;

double a = cos(theta), b = sin(theta);

double x0 = a*rho, y0 = b*rho;

pt1.x = cvRound(x0 + 1000*(-b));

pt1.y = cvRound(y0 + 1000*(a));

pt2.x = cvRound(x0 - 1000*(-b));

pt2.y = cvRound(y0 - 1000*(a));

//The OpenCV2 version of this code is:

//line( dstImage, pt1, pt2, Scalar(55,100,195), 1, CV_AA);

//The OpenCV3 version of this code is:

line( dstImage, pt1, pt2, Scalar(55,100,195), 1, LINE_AA);

}

//[5] Show original drawing

imshow("[Original drawing]", srcImage);

//[6] Image after edge detection

imshow("[Figure after edge detection]", midImage);

//[7] Display renderings

imshow("[Effect drawing]", dstImage);

waitKey(0);

}

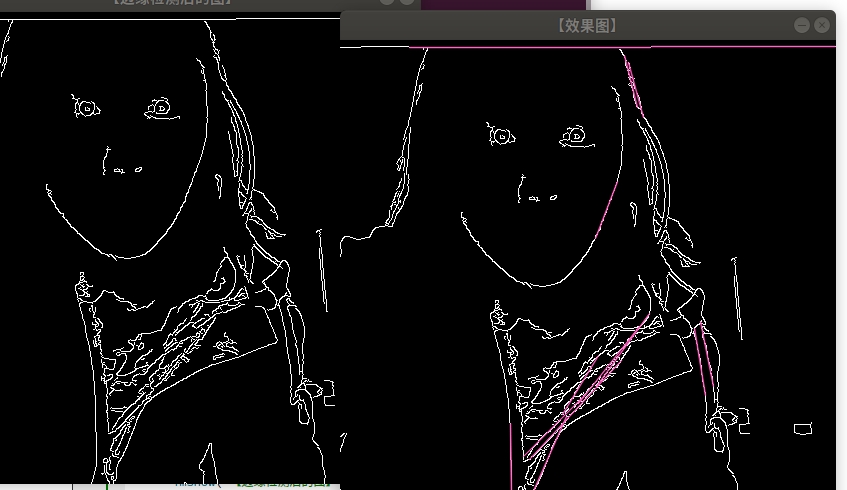

Cumulative probability Hough transform: HoughLinesP() function

Building on HoughLines, this function adds a trailing P for Probabilistic, indicating that it uses the cumulative probability Hough transform (PPHT) to find line segments in a binary image.

C++: void HoughLinesP(InputArray image, OutputArray lines, double rho, double theta, int threshold, double minLineLength=0, double maxLineGap=0)

The first parameter, image of type InputArray, is the input (source) image. It must be an 8-bit single-channel binary image. You can load any source image, convert it to this format with a suitable function (for example Canny edge detection), and then pass it here.

The second parameter, lines of type OutputArray, receives the output vector of detected segments after HoughLinesP returns. Each segment is represented by a four-element vector (x_1, y_1, x_2, y_2), where (x_1, y_1) and (x_2, y_2) are the end points of the detected line segment.

The third parameter, rho of type double, is the distance resolution in pixels, that is, the step size used for ρ when searching for lines.

The fourth parameter, theta of type double, is the angular resolution in radians, that is, the step size used for θ when searching for lines.

The fifth parameter, threshold of type int, is the threshold of the accumulator plane: the number of votes a cell of the accumulator must reach for it to be identified as a straight line. Only lines with more votes than the threshold are detected and returned.

The sixth parameter, minLineLength of type double, has a default value of 0 and gives the minimum segment length. Segments shorter than this value are rejected.

The seventh parameter, maxLineGap of type double, has a default value of 0 and gives the maximum gap allowed between two points on the same line for them to be linked into one segment.
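How minLineLength and maxLineGap interact can be sketched in one dimension. The helper below is hypothetical (splitIntoSegments is not an OpenCV function, and OpenCV's real implementation walks the image differently): it takes the positions of edge points measured along one candidate line, links neighbours whose gap does not exceed maxLineGap, and keeps only the resulting segments that are at least minLineLength long.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical helper: `positions` are pixel offsets of edge points along one line.
// Returns (start, end) offsets of the segments that survive both parameters.
std::vector<std::pair<int, int>> splitIntoSegments(std::vector<int> positions,
                                                   int minLineLength, int maxLineGap)
{
    assert(minLineLength >= 0 && maxLineGap >= 0);
    std::vector<std::pair<int, int>> segments;
    if (positions.empty()) return segments;

    std::sort(positions.begin(), positions.end());
    int start = positions.front();
    int prev = start;
    for (std::size_t i = 1; i <= positions.size(); ++i) {
        const bool atEnd = (i == positions.size());
        // A gap larger than maxLineGap (or the end of the data) closes the segment.
        if (atEnd || positions[i] - prev > maxLineGap) {
            if (prev - start >= minLineLength)  // too-short segments are rejected
                segments.push_back({start, prev});
            if (!atEnd) start = positions[i];
        }
        if (!atEnd) prev = positions[i];
    }
    return segments;
}
```

For points at 0, 2, ..., 60 followed by 80 and 82, the values minLineLength = 50 and maxLineGap = 10 give the single segment (0, 60): the gap of 20 splits the line, and the short piece from 80 to 82 is discarded. Raising maxLineGap to 25 links everything into (0, 82).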

#include "opencv2/core.hpp"

#include <opencv2/imgproc.hpp>

#include "opencv2/video.hpp"

#include "opencv2/videoio.hpp"

#include "opencv2/highgui.hpp"

#include"opencv2/imgproc/imgproc.hpp"

#include<opencv2/opencv.hpp>

#include <iostream>

using namespace std;

using namespace cv;

int main()

{

//[1] Load original drawing and Mat variable definition

Mat srcImage = imread("/home/heziyi/picture/6.jpg"); //Load the source image; change the path to an image on your machine

Mat midImage,dstImage;//Definition of temporary variables and target graph

//[2] Edge detection and conversion of the edge map to a BGR image

Canny(srcImage, midImage, 50, 200, 3);//Perform a canny edge detection

cvtColor(midImage,dstImage, COLOR_GRAY2BGR);//Convert the single-channel edge map to BGR so that colored lines can be drawn on it

//[3] Perform Hough line transformation

vector<Vec4i> lines;//Vector that stores the detected segments as (x1, y1, x2, y2)

HoughLinesP(midImage, lines, 1, CV_PI/180, 80, 50, 10 );

//[4] Draw each line segment in the figure in turn

for( size_t i = 0; i < lines.size(); i++ )

{

Vec4i l = lines[i];

//The OpenCV2 version of this code is:

//line( dstImage, Point(l[0], l[1]), Point(l[2], l[3]), Scalar(186,88,255), 1, CV_AA);

//The OpenCV3 version of this code is:

line( dstImage, Point(l[0], l[1]), Point(l[2], l[3]), Scalar(186,88,255), 1, LINE_AA);

}

//[5] Show original drawing

imshow("[Original drawing]", srcImage);

//[6] Image after edge detection

imshow("[Figure after edge detection]", midImage);

//[7] Display effect diagram

imshow("[Effect drawing]", dstImage);

waitKey(0);

}

The original image contains no straight lines, so the detection result is not very pronounced.

The findContours() function is used to find contours in binary images.

C++: void findContours(InputOutputArray image, OutputArrayOfArrays contours, OutputArray hierarchy, int mode, int method, Point offset=Point())

The first parameter, image, is the input (source) image. A Mat object can be passed here, and it must be an 8-bit single-channel image.

The second parameter, contours of type OutputArrayOfArrays, receives the detected contours. Each contour is stored as a vector of points.

The third parameter, hierarchy of type OutputArray, is an optional output vector that contains the topological information of the image. It has as many elements as there are contours. Each contour contours[i] corresponds to four elements hierarchy[i][0] to hierarchy[i][3], which hold the indices of the next contour, the previous contour, the first child (nested) contour and the parent contour respectively. If there is no corresponding contour, the element is set to a negative value.

The fourth parameter, mode of type int, is the contour retrieval mode, and the fifth parameter, method of type int, is the contour approximation method.

vector<vector<Point> > contours;

findContours(image,

contours, // Contour array

RETR_EXTERNAL, // Retrieve only the outer contours (CV_RETR_EXTERNAL in OpenCV 2)

CHAIN_APPROX_NONE); // Store every pixel of each contour (CV_CHAIN_APPROX_NONE in OpenCV 2)
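The hierarchy output is easier to follow with a small stand-alone sketch. It models each hierarchy entry as four indices in the order documented by OpenCV (next, previous, first child, parent) and walks them in plain C++. The names HierarchyEntry, topLevelContours and nestingDepth are hypothetical, and the sketch assumes that contour 0 lies on the top level.

```cpp
#include <array>
#include <cassert>
#include <vector>

// One hierarchy entry: {next, previous, first child, parent}; -1 means "none".
using HierarchyEntry = std::array<int, 4>;

// Hypothetical helper: follow the "next" links starting at contour 0,
// guarding against an empty hierarchy.
std::vector<int> topLevelContours(const std::vector<HierarchyEntry>& hierarchy)
{
    std::vector<int> indices;
    int index = hierarchy.empty() ? -1 : 0;
    while (index >= 0) {
        indices.push_back(index);
        index = hierarchy[index][0];  // [0] = next contour on the same level
    }
    return indices;
}

// Hypothetical helper: count how many ancestors a contour has.
int nestingDepth(const std::vector<HierarchyEntry>& hierarchy, int index)
{
    assert(index >= 0 && index < static_cast<int>(hierarchy.size()));
    int depth = 0;
    for (int parent = hierarchy[index][3]; parent >= 0; parent = hierarchy[parent][3])
        ++depth;  // [3] = parent contour
    return depth;
}
```

For a hierarchy with an outer contour 0 that encloses contour 1 and has a sibling contour 2, topLevelContours yields 0 and 2, and nestingDepth reports 1 for contour 1.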

Example:

#include "opencv2/core.hpp"

#include <opencv2/imgproc.hpp>

#include "opencv2/video.hpp"

#include "opencv2/videoio.hpp"

#include "opencv2/highgui.hpp"

#include"opencv2/imgproc/imgproc.hpp"

#include<opencv2/opencv.hpp>

#include <iostream>

using namespace std;

using namespace cv;

int main()

{

//[1] Load original drawing and Mat variable definition

Mat srcImage = imread("/home/heziyi/picture/6.jpg"); //Load the source image; change the path to an image on your machine

imshow("Original drawing",srcImage);

//[2] Initialization result diagram

Mat dstImage;

Mat midImage;

//[3] Binarize srcImage: values greater than the threshold 119 become 255, all others 0

srcImage = srcImage > 119;

imshow( "Original graph after threshold", srcImage );

//[4] Define the contours and the hierarchy

vector<vector<Point> > contours;

vector<Vec4i> hierarchy;

//[5] Find the contours

//The OpenCV2 version of this code is:

//findContours( srcImage, contours, hierarchy,CV_RETR_CCOMP, CV_CHAIN_APPROX_SIMPLE );

//The OpenCV3 version of this code is:

cvtColor(srcImage, midImage, COLOR_BGR2GRAY);//Convert the thresholded image to a single channel, as findContours requires

dstImage = Mat::zeros(srcImage.size(), CV_8UC3);//Black three-channel canvas so that the random colors are visible

findContours( midImage, contours, hierarchy,RETR_TREE, CHAIN_APPROX_SIMPLE );

// [6] Traverse all top-level contours and fill each connected component with a random color

int index = contours.empty() ? -1 : 0; //Skip the loop when no contour was found

for( ; index >= 0; index = hierarchy[index][0] )

{

Scalar color( rand()&255, rand()&255, rand()&255 );

//The OpenCV2 version of this code is:

//drawContours( dstImage, contours, index, color, CV_FILLED, 8, hierarchy );

//The OpenCV3 version of this code is:

drawContours( dstImage, contours, index, color, FILLED, 8, hierarchy ); }

//[7] Show the final contour image

imshow( "Outline drawing", dstImage );

waitKey(0);

}

grabCut() function (official sample)

#include "opencv2/imgcodecs.hpp"

#include "opencv2/highgui.hpp"

#include "opencv2/imgproc.hpp"

#include <iostream>

using namespace std;

using namespace cv;

static void help()

{

cout << "\nThis program demonstrates GrabCut segmentation -- select an object in a region\n"

"and then grabcut will attempt to segment it out.\n"

"Call:\n"

"./grabcut <image_name>\n"

"\nSelect a rectangular area around the object you want to segment\n" <<

"\nHot keys: \n"

"\tESC - quit the program\n"

"\tr - restore the original image\n"

"\tn - next iteration\n"

"\n"

"\tleft mouse button - set rectangle\n"

"\n"

"\tCTRL+left mouse button - set GC_BGD pixels\n"

"\tSHIFT+left mouse button - set GC_FGD pixels\n"

"\n"

"\tCTRL+right mouse button - set GC_PR_BGD pixels\n"

"\tSHIFT+right mouse button - set GC_PR_FGD pixels\n" << endl;

}

const Scalar RED = Scalar(0,0,255);

const Scalar PINK = Scalar(230,130,255);

const Scalar BLUE = Scalar(255,0,0);

const Scalar LIGHTBLUE = Scalar(255,255,160);

const Scalar GREEN = Scalar(0,255,0);

const int BGD_KEY = EVENT_FLAG_CTRLKEY;

const int FGD_KEY = EVENT_FLAG_SHIFTKEY;

static void getBinMask( const Mat& comMask, Mat& binMask )

{

if( comMask.empty() || comMask.type()!=CV_8UC1 )

CV_Error( Error::StsBadArg, "comMask is empty or has incorrect type (not CV_8UC1)" );

if( binMask.empty() || binMask.rows!=comMask.rows || binMask.cols!=comMask.cols )

binMask.create( comMask.size(), CV_8UC1 );

binMask = comMask & 1;

}

class GCApplication

{

public:

enum{ NOT_SET = 0, IN_PROCESS = 1, SET = 2 };

static const int radius = 2;

static const int thickness = -1;

void reset();

void setImageAndWinName( const Mat& _image, const string& _winName );

void showImage() const;

void mouseClick( int event, int x, int y, int flags, void* param );

int nextIter();

int getIterCount() const { return iterCount; }

private:

void setRectInMask();

void setLblsInMask( int flags, Point p, bool isPr );

const string* winName;

const Mat* image;

Mat mask;

Mat bgdModel, fgdModel;

uchar rectState, lblsState, prLblsState;

bool isInitialized;

Rect rect;

vector<Point> fgdPxls, bgdPxls, prFgdPxls, prBgdPxls;

int iterCount;

};

void GCApplication::reset()

{

if( !mask.empty() )

mask.setTo(Scalar::all(GC_BGD));

bgdPxls.clear(); fgdPxls.clear();

prBgdPxls.clear(); prFgdPxls.clear();

isInitialized = false;

rectState = NOT_SET;

lblsState = NOT_SET;

prLblsState = NOT_SET;

iterCount = 0;

}

void GCApplication::setImageAndWinName( const Mat& _image, const string& _winName )

{

if( _image.empty() || _winName.empty() )

return;

image = &_image;

winName = &_winName;

mask.create( image->size(), CV_8UC1);

reset();

}

void GCApplication::showImage() const

{

if( image->empty() || winName->empty() )

return;

Mat res;

Mat binMask;

if( !isInitialized )

image->copyTo( res );

else

{

getBinMask( mask, binMask );

image->copyTo( res, binMask );

}

vector<Point>::const_iterator it;

for( it = bgdPxls.begin(); it != bgdPxls.end(); ++it )

circle( res, *it, radius, BLUE, thickness );

for( it = fgdPxls.begin(); it != fgdPxls.end(); ++it )

circle( res, *it, radius, RED, thickness );

for( it = prBgdPxls.begin(); it != prBgdPxls.end(); ++it )

circle( res, *it, radius, LIGHTBLUE, thickness );

for( it = prFgdPxls.begin(); it != prFgdPxls.end(); ++it )

circle( res, *it, radius, PINK, thickness );

if( rectState == IN_PROCESS || rectState == SET )

rectangle( res, Point( rect.x, rect.y ), Point(rect.x + rect.width, rect.y + rect.height ), GREEN, 2);

imshow( *winName, res );

}

void GCApplication::setRectInMask()

{

CV_Assert( !mask.empty() );

mask.setTo( GC_BGD );

rect.x = max(0, rect.x);

rect.y = max(0, rect.y);

rect.width = min(rect.width, image->cols-rect.x);

rect.height = min(rect.height, image->rows-rect.y);

(mask(rect)).setTo( Scalar(GC_PR_FGD) );

}

void GCApplication::setLblsInMask( int flags, Point p, bool isPr )

{

vector<Point> *bpxls, *fpxls;

uchar bvalue, fvalue;

if( !isPr )

{

bpxls = &bgdPxls;

fpxls = &fgdPxls;

bvalue = GC_BGD;

fvalue = GC_FGD;

}

else

{

bpxls = &prBgdPxls;

fpxls = &prFgdPxls;

bvalue = GC_PR_BGD;

fvalue = GC_PR_FGD;

}

if( flags & BGD_KEY )

{

bpxls->push_back(p);

circle( mask, p, radius, bvalue, thickness );

}

if( flags & FGD_KEY )

{

fpxls->push_back(p);

circle( mask, p, radius, fvalue, thickness );

}

}

void GCApplication::mouseClick( int event, int x, int y, int flags, void* )

{

// TODO add bad args check

switch( event )

{

case EVENT_LBUTTONDOWN: // set rect or GC_BGD(GC_FGD) labels

{

bool isb = (flags & BGD_KEY) != 0,

isf = (flags & FGD_KEY) != 0;

if( rectState == NOT_SET && !isb && !isf )

{

rectState = IN_PROCESS;

rect = Rect( x, y, 1, 1 );

}

if ( (isb || isf) && rectState == SET )

lblsState = IN_PROCESS;

}

break;

case EVENT_RBUTTONDOWN: // set GC_PR_BGD(GC_PR_FGD) labels

{

bool isb = (flags & BGD_KEY) != 0,

isf = (flags & FGD_KEY) != 0;

if ( (isb || isf) && rectState == SET )

prLblsState = IN_PROCESS;

}

break;

case EVENT_LBUTTONUP:

if( rectState == IN_PROCESS )

{

rect = Rect( Point(rect.x, rect.y), Point(x,y) );

rectState = SET;

setRectInMask();

CV_Assert( bgdPxls.empty() && fgdPxls.empty() && prBgdPxls.empty() && prFgdPxls.empty() );

showImage();

}

if( lblsState == IN_PROCESS )

{

setLblsInMask(flags, Point(x,y), false);

lblsState = SET;

showImage();

}

break;

case EVENT_RBUTTONUP:

if( prLblsState == IN_PROCESS )

{

setLblsInMask(flags, Point(x,y), true);

prLblsState = SET;

showImage();

}

break;

case EVENT_MOUSEMOVE:

if( rectState == IN_PROCESS )

{

rect = Rect( Point(rect.x, rect.y), Point(x,y) );

CV_Assert( bgdPxls.empty() && fgdPxls.empty() && prBgdPxls.empty() && prFgdPxls.empty() );

showImage();

}

else if( lblsState == IN_PROCESS )

{

setLblsInMask(flags, Point(x,y), false);

showImage();

}

else if( prLblsState == IN_PROCESS )

{

setLblsInMask(flags, Point(x,y), true);

showImage();

}

break;

}

}

int GCApplication::nextIter()

{

if( isInitialized )

grabCut( *image, mask, rect, bgdModel, fgdModel, 1 );

else

{

if( rectState != SET )

return iterCount;

if( lblsState == SET || prLblsState == SET )

grabCut( *image, mask, rect, bgdModel, fgdModel, 1, GC_INIT_WITH_MASK );

else

grabCut( *image, mask, rect, bgdModel, fgdModel, 1, GC_INIT_WITH_RECT );

isInitialized = true;

}

iterCount++;

bgdPxls.clear(); fgdPxls.clear();

prBgdPxls.clear(); prFgdPxls.clear();

return iterCount;

}

GCApplication gcapp;

static void on_mouse( int event, int x, int y, int flags, void* param )

{

gcapp.mouseClick( event, x, y, flags, param );

}

int main( int argc, char** argv )

{

cv::CommandLineParser parser(argc, argv, "{@input| /home/heziyi/picture/6.jpg |}");

help();

string filename = parser.get<string>("@input");

if( filename.empty() )

{

cout << "\nDurn, empty filename" << endl;

return 1;

}

Mat image = imread(samples::findFile(filename), IMREAD_COLOR);

if( image.empty() )

{

cout << "\n Durn, couldn't read image filename " << filename << endl;

return 1;

}

const string winName = "image";

namedWindow( winName, WINDOW_AUTOSIZE );

setMouseCallback( winName, on_mouse, 0 );

gcapp.setImageAndWinName( image, winName );

gcapp.showImage();

for(;;)

{

char c = (char)waitKey(0);

switch( c )

{

case '\x1b':

cout << "Exiting ..." << endl;

goto exit_main;

case 'r':

cout << endl;

gcapp.reset();

gcapp.showImage();

break;

case 'n':

int iterCount = gcapp.getIterCount();

cout << "<" << iterCount << "... ";

int newIterCount = gcapp.nextIter();

if( newIterCount > iterCount )

{

gcapp.showImage();

cout << iterCount << ">" << endl;

}

else

cout << "rect must be determined>" << endl;

break;

}

}

exit_main:

destroyWindow( winName );

return 0;

}
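The getBinMask helper in the sample above reduces the four GrabCut labels to a binary mask with comMask & 1. This works because OpenCV defines GC_BGD = 0, GC_FGD = 1, GC_PR_BGD = 2 and GC_PR_FGD = 3, so the lowest bit is set for both foreground labels only. A minimal sketch of the same idea in plain C++, without OpenCV (the constant names and toBinaryMask are hypothetical stand-ins for the OpenCV enum and the Mat operation):

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Stand-ins for OpenCV's GC_BGD, GC_FGD, GC_PR_BGD and GC_PR_FGD values.
constexpr std::uint8_t kBgd = 0;    // definite background
constexpr std::uint8_t kFgd = 1;    // definite foreground
constexpr std::uint8_t kPrBgd = 2;  // probable background
constexpr std::uint8_t kPrFgd = 3;  // probable foreground

// Hypothetical helper: keep only the lowest bit of every label, which is 1
// for (probable) foreground and 0 for (probable) background.
std::vector<std::uint8_t> toBinaryMask(const std::vector<std::uint8_t>& labels)
{
    std::vector<std::uint8_t> binary;
    binary.reserve(labels.size());
    for (std::uint8_t label : labels) {
        assert(label <= kPrFgd);  // only the four GrabCut labels are expected
        binary.push_back(static_cast<std::uint8_t>(label & 1));
    }
    return binary;
}
```

Applied to the labels 0, 1, 2, 3 the result is 0, 1, 0, 1, which is why image->copyTo(res, binMask) in showImage copies only the pixels currently classified as foreground or probable foreground.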