History of threads: a blood-and-tears history of squeezing the CPU

1. Single-threaded, manual switching (punched paper tape machines)

CPU utilization is low: the machine sits idle while people queue up to swap paper tapes by hand.

2. Multi-process batch processing (several tasks executed as one batch)

However, if one task has to wait, every task behind it waits as well.

3. Multi-process concurrent processing (programs are loaded at different memory locations and the CPU switches back and forth between them)

4. Multithreading (switching between different tasks within a single program)

5. Fibers / coroutines (green threads: threads managed in user space, not by the OS)

Process and thread concepts

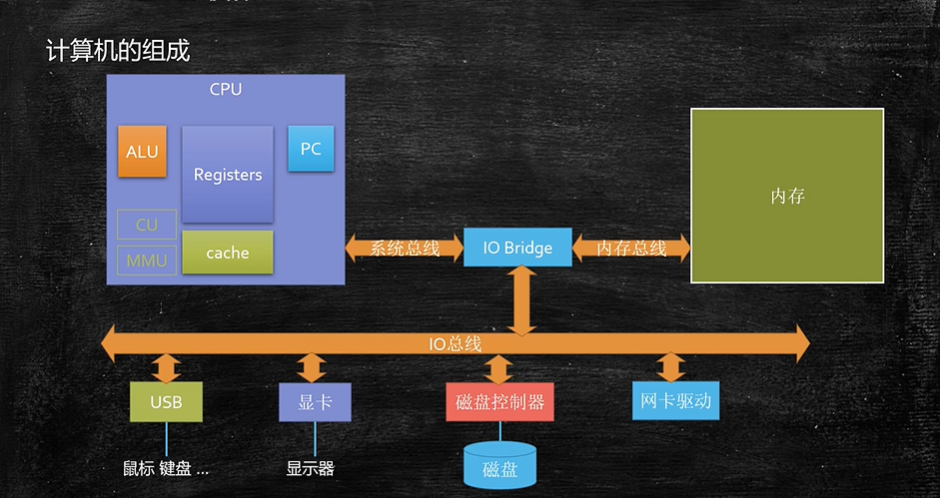

First, look at the components of the computer in the figure above.

Program:

An executable file.

Process:

When we double-click a program on our computer, such as QQ.exe, the operating system loads the program into memory, and a running instance of QQ.exe now exists in memory. We can double-click QQ.exe again to log in to another account. A single program can therefore have many copies in memory, and each copy in memory is called a process.

A process is the basic unit of resource allocation by the operating system (a static concept).
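To see that every launch of a program is its own process, here is a minimal sketch, assuming Java 9 or later (where `ProcessHandle` is available). The class name `ProcessDemo` is only illustrative. Start it twice at the same time and each copy should report a different process ID.

```java
// Minimal sketch (assumes Java 9+): each launch of this program is a separate process.
class ProcessDemo {
    // Returns the operating-system process ID of the JVM running this code.
    static long currentPid() {
        return ProcessHandle.current().pid();
    }

    public static void main(String[] args) {
        System.out.println("This copy of the program runs as process " + currentPid());
    }
}
```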

Thread:

Still referring to the figure above: double-clicking QQ.exe loads the program into memory, where it becomes a process. When execution actually begins, the program runs as threads. The operating system finds the process's main thread and hands it to the CPU for execution. If the main thread then starts other threads, the CPU switches back and forth among them.

Conceptually, a thread is the basic unit of CPU scheduling and execution (a dynamic concept; multiple threads share all the resources of the process they belong to).
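The sharing of resources can be sketched in a few lines. In this illustrative example (the names `SharedMemoryDemo` and `shared` are made up for the demo), the main thread starts a worker thread, and both of them use the same array because they live in the same process.

```java
// Minimal sketch: two threads in one process read and write the same heap object.
class SharedMemoryDemo {
    // Visible to every thread in this process (illustrative name).
    static final int[] shared = new int[1];

    static int run() {
        Thread worker = new Thread(() -> shared[0] = 42, "worker");
        worker.start();              // the main thread starts another thread
        try {
            worker.join();           // wait for it; join() also makes its write visible
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return shared[0];            // the caller sees the value the worker wrote
    }

    public static void main(String[] args) {
        System.out.println("value written by worker: " + run());
    }
}
```

Two separate processes could not do this directly: each has its own memory, so they would need some form of inter-process communication.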

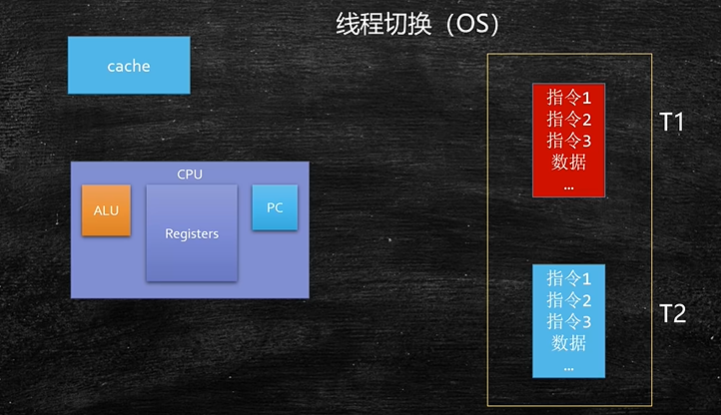

Thread switching (understood from the hardware level):

ALU: the arithmetic logic unit, which performs the computation

Registers: the register group (holds the data being operated on)

PC: the program counter register (records which instruction is to be executed next)

Cache: fast memory that keeps recently used instructions and data close to the CPU

When thread T1 is to run, T1's data and instructions are loaded into the CPU, and the ALU performs the computation. Once a result has been computed, it is output and T1 moves on to its next operations.

However, under the operating system's scheduling algorithm, if T1 uses up its current CPU time slice partway through its work, T1's instructions and data (including the register contents and the PC value) are saved to the cache, and T2's instructions and data are loaded into the CPU to continue computing. When T2's time slice is up, T2's instructions and data are saved to the cache and T1's are read back from the cache. This save-and-restore is carried out by the operating system's scheduler and is called a thread context switch.
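The save-and-restore cycle described above can be imitated with a toy model. This is only a sketch for intuition and not how a real operating system or CPU is implemented: the `Context` class, the `runRoundRobin` method, and the "one addition equals one instruction" rule are all assumptions made for the example. Each simulated thread sums the numbers 1 to `steps`, and the scheduler switches threads whenever a time slice of `timeSlice` instructions is used up.

```java
// Toy model of thread context switching; not how a real OS or CPU works.
class ContextSwitchDemo {
    // Saved context of one simulated thread.
    static final class Context {
        final String name;
        int pc;          // index of the next instruction to execute
        long register;   // running sum held in a data register
        Context(String name) { this.name = name; }
    }

    // Runs T1 and T2 in turn; returns the final register value of each.
    static long[] runRoundRobin(int steps, int timeSlice) {
        if (timeSlice <= 0) {
            throw new IllegalArgumentException("timeSlice must be positive");
        }
        Context[] threads = { new Context("T1"), new Context("T2") };
        int current = 0;
        while (threads[0].pc < steps || threads[1].pc < steps) {
            Context ctx = threads[current];
            // Restore: load the saved context into the simulated CPU.
            int pc = ctx.pc;
            long reg = ctx.register;
            // Execute until the time slice is used up or the thread is done.
            for (int used = 0; used < timeSlice && pc < steps; used++) {
                reg += pc + 1;   // one "instruction": add the next number
                pc++;
            }
            // Save: write the CPU state back so the thread can resume later.
            ctx.pc = pc;
            ctx.register = reg;
            current = (current + 1) % threads.length;   // switch to the other thread
        }
        return new long[] { threads[0].register, threads[1].register };
    }

    public static void main(String[] args) {
        long[] sums = runRoundRobin(10, 3);
        System.out.println("T1 = " + sums[0] + ", T2 = " + sums[1]);
    }
}
```

Because each thread's `pc` and `register` are saved before the switch and restored afterwards, both threads reach the correct sum even though neither runs from start to finish without interruption.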

Is multithreading meaningful on a single-core CPU?

Yes. For example, suppose a task running on the CPU has to wait for a network response (waiting does not consume CPU resources). If there are multiple threads, the CPU can switch to another thread and run its task during the wait, so CPU resources are fully used.
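A rough way to observe this is to simulate the network wait with `Thread.sleep`. In the sketch below (the class and method names are illustrative, and 200 ms is an arbitrary stand-in for a network delay), two waiting tasks are run one after the other and then on two threads. Since a sleeping thread does not occupy the CPU, the two waits can overlap even on a single core.

```java
// Minimal sketch: waiting tasks overlap when they run on separate threads.
class WaitOverlapDemo {
    // Simulates a task that mostly waits, e.g. for a network response.
    static void waitingTask() {
        try {
            Thread.sleep(200);   // a sleeping thread does not use the CPU
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    // Runs the two tasks back to back on the calling thread.
    static long sequentialMillis() {
        long start = System.nanoTime();
        waitingTask();
        waitingTask();
        return (System.nanoTime() - start) / 1_000_000;
    }

    // Runs the two tasks on two threads so that their waits overlap.
    static long threadedMillis() {
        Thread t1 = new Thread(WaitOverlapDemo::waitingTask);
        Thread t2 = new Thread(WaitOverlapDemo::waitingTask);
        long start = System.nanoTime();
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return (System.nanoTime() - start) / 1_000_000;
    }

    public static void main(String[] args) {
        System.out.println("sequential: " + sequentialMillis() + " ms");
        System.out.println("two threads: " + threadedMillis() + " ms");
    }
}
```

The exact numbers depend on the machine, but the threaded run should take roughly the time of one wait rather than two.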

CPU-intensive: most of the time is spent computing.

IO-intensive: most of the time is spent waiting.

Is it better to set as many worker threads as possible?

No. With too many threads, CPU resources are consumed by thread switching.
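As a starting point for choosing a number, a widely quoted rule of thumb (it appears, for example, in the book Java Concurrency in Practice) is: threads = cores × target CPU utilization × (1 + wait time / compute time). The sketch below just evaluates that formula; the class name, method name, and the sample wait/compute ratios are assumptions for illustration, and real values should come from measuring the actual workload.

```java
// Minimal sketch of a common thread-count rule of thumb; treat the result as a starting point.
class ThreadCountEstimate {
    // cores: number of CPU cores
    // targetUtilization: desired CPU utilization between 0 and 1
    // waitTime / computeTime: time a task spends waiting vs. computing (same unit)
    static int estimate(int cores, double targetUtilization, double waitTime, double computeTime) {
        double n = cores * targetUtilization * (1 + waitTime / computeTime);
        return Math.max(1, (int) Math.round(n));
    }

    public static void main(String[] args) {
        int cores = Runtime.getRuntime().availableProcessors();
        // CPU-intensive: almost no waiting, so about one thread per core.
        System.out.println("CPU-intensive: " + estimate(cores, 1.0, 0.0, 1.0));
        // IO-intensive: here assumed to wait 9 times longer than it computes.
        System.out.println("IO-intensive:  " + estimate(cores, 1.0, 9.0, 1.0));
    }
}
```

This matches the two cases above: a CPU-intensive task gains little from more threads than cores, while an IO-intensive task can use more threads because most of them are waiting at any moment.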

import java.text.DecimalFormat;
import java.util.Random;
import java.util.concurrent.CountDownLatch;

public class Main {

    //===================================================
    // Test data: 100 million random doubles (about 800 MB of heap).
    private static double[] nums = new double[100_000_000];
    private static Random r = new Random();
    private static DecimalFormat df = new DecimalFormat("0.00");

    static {
        for (int i = 0; i < nums.length; i++) {
            nums[i] = r.nextDouble();
        }
    }

    // m1: sum the whole array on a single thread.
    private static void m1() {
        long start = System.currentTimeMillis();
        double result = 0.0;
        for (int i = 0; i < nums.length; i++) {
            result += nums[i];
        }
        long end = System.currentTimeMillis();
        System.out.println("m1: " + (end - start) + " result = " + df.format(result));
    }

    //=======================================================
    static double result1 = 0.0, result2 = 0.0, result = 0.0;

    // m2: split the array in half and sum each half on its own thread.
    private static void m2() throws Exception {
        Thread t1 = new Thread(() -> {
            for (int i = 0; i < nums.length / 2; i++) {
                result1 += nums[i];
            }
        });
        Thread t2 = new Thread(() -> {
            for (int i = nums.length / 2; i < nums.length; i++) {
                result2 += nums[i];
            }
        });
        long start = System.currentTimeMillis();
        t1.start();
        t2.start();
        // join() waits for both threads and makes their results visible here.
        t1.join();
        t2.join();
        result = result1 + result2;
        long end = System.currentTimeMillis();
        System.out.println("m2: " + (end - start) + " result = " + df.format(result));
    }

    //===================================================================
    // m3: split the array into 10000 segments, one thread per segment.
    private static void m3() throws Exception {
        final int threadCount = 10000;
        Thread[] threads = new Thread[threadCount];
        double[] results = new double[threadCount];
        final int segmentCount = nums.length / threadCount; // elements per thread
        CountDownLatch latch = new CountDownLatch(threadCount);
        for (int i = 0; i < threadCount; i++) {
            int m = i;
            threads[i] = new Thread(() -> {
                for (int j = m * segmentCount; j < (m + 1) * segmentCount && j < nums.length; j++) {
                    results[m] += nums[j];
                }
                latch.countDown();
            });
        }
        double resultM3 = 0.0;
        long start = System.currentTimeMillis();
        for (Thread t : threads) {
            t.start();
        }
        latch.await(); // wait until every thread has finished its segment
        // Add any elements left over when the length is not divisible by threadCount.
        for (int i = segmentCount * threadCount; i < nums.length; i++) {
            resultM3 += nums[i];
        }
        // Combine the per-thread partial sums.
        for (int i = 0; i < results.length; i++) {
            resultM3 += results[i];
        }
        long end = System.currentTimeMillis();
        System.out.println("m3: " + (end - start) + " result = " + df.format(resultM3));
    }

    public static void main(String[] args) throws Exception {
        m1();
        m2();
        m3();
    }
}

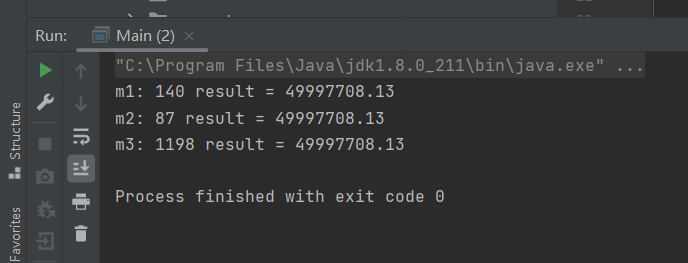

The results of running the program are shown in the figure above.

The m3 method uses 10000 threads, yet it takes more than 1000 ms to run. Far from speeding things up, that many threads greatly reduces efficiency.
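For comparison, the same kind of summation can be run on a small, fixed number of threads. The sketch below is one possible variant and not part of the program above: the class `PooledSum`, its `sum` method, and the choice of one thread per available processor are assumptions for illustration. It uses a smaller array so that it runs with default heap settings.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Minimal sketch: sum an array with a fixed-size thread pool instead of 10000 threads.
class PooledSum {
    static double sum(double[] nums, int threadCount) {
        ExecutorService pool = Executors.newFixedThreadPool(threadCount);
        try {
            // Segment size rounded up so that every element is covered.
            int segment = (nums.length + threadCount - 1) / threadCount;
            List<Future<Double>> parts = new ArrayList<>();
            for (int t = 0; t < threadCount; t++) {
                final int from = Math.min(nums.length, t * segment);
                final int to = Math.min(nums.length, from + segment);
                Callable<Double> task = () -> {
                    double local = 0.0;   // per-task partial sum
                    for (int i = from; i < to; i++) {
                        local += nums[i];
                    }
                    return local;
                };
                parts.add(pool.submit(task));
            }
            double total = 0.0;
            for (Future<Double> part : parts) {
                total += part.get();      // blocks until that segment is done
            }
            return total;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        double[] nums = new Random().doubles(10_000_000).toArray();
        int threads = Runtime.getRuntime().availableProcessors();
        long start = System.currentTimeMillis();
        double result = sum(nums, threads);
        long end = System.currentTimeMillis();
        System.out.println(threads + " threads: " + (end - start) + " ms, result = " + result);
    }
}
```

Timings will vary from machine to machine, so it is worth calling `sum` with a few different thread counts and comparing them on your own hardware.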