Preface

Hexo is a static blog generator based on Node.js. Unlike traditional dynamic blog programs such as WordPress and Typecho, which render pages on the server, Hexo traverses every page of the blog, renders posts and other content into the theme (i.e. the page templates), and generates the HTML files of all pages together with the static resources they reference, such as CSS and JS. These static files are usually served by hosting them on a Pages service, in object storage, or on a self-hosted Nginx server.

A blog built with Hexo avoids the time and computing cost of repeatedly rendering the same page on the server, but it also moves more modules and page logic to the front end. Different bloggers need different features, so a theme's optional features are often modular and pull in many static resources such as JS, CSS, images and fonts. How the pages of a Hexo blog and the static resources they depend on are loaded and cached therefore greatly affects the visitor's experience. Some optimization methods are described below.

Avoid blocking caused by resource loading

HTML pages usually import CSS and JS files through <link rel="stylesheet" href> and <script src> tags. While a referenced resource is loading, the browser's parsing and rendering of the HTML that follows is blocked. If resources are referenced in the head of the page and load slowly, the time the visitor spends looking at a blank page increases significantly.

<link rel="stylesheet" href="/css/style/main.css">
<!-- Slow-loading CSS -->
<link href="https://fonts.googleapis.com/css2?family=Noto+Sans+SC&display=swap" rel="stylesheet">

My site originally loaded the Chinese font provided by Google Fonts directly, which requires loading a fairly large CSS file and noticeably delayed the moment the page finished loading. This font style is not essential for displaying the page, so the browser can be made to load the CSS file without blocking. The specific steps are as follows:

- Add a media attribute with the value only x to the link tag. This value does not match the current page in the browser's media query, so the browser still downloads the CSS file but does not apply it, and the download does not block page rendering.

- Add an onload attribute to the link tag; it runs after the browser has finished loading the CSS. It does two things: (1) clears the onload callback to avoid repeated execution; (2) sets the media attribute to all, which makes the browser apply the CSS to the page.

<!-- Page rendering is not blocked while this CSS loads -->
<link href="https://fonts.googleapis.com/css2?family=Noto+Sans+SC&display=swap" rel="stylesheet" media="only x" onload="this.onload=null;this.media='all'">
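When a theme generates such tags from a template, the same markup can be produced by a small function. The following is only a minimal sketch and not part of Hexo or of the setup described here: the names escapeAttr and asyncStylesheetTag are hypothetical, and the noscript fallback is an addition for visitors without JavaScript, for whom the onload handler would never run.

```javascript
// Hypothetical helper, for illustration only: builds a stylesheet tag that
// does not block rendering, using the media/onload switch described above.
function escapeAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

function asyncStylesheetTag(href) {
  const safeHref = escapeAttr(href);
  // media="only x" matches nothing, so the CSS is downloaded but not applied
  // until the onload handler switches media to "all".
  const deferred =
    `<link rel="stylesheet" href="${safeHref}" media="only x" ` +
    `onload="this.onload=null;this.media='all'">`;
  // Assumption: without JavaScript the handler never runs, so a plain link
  // inside <noscript> keeps the style available.
  const fallback =
    `<noscript><link rel="stylesheet" href="${safeHref}"></noscript>`;
  return deferred + fallback;
}
```

In a Hexo theme, a function like this could be exposed to templates through hexo.extend.helper.register under a helper name of your own choosing.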

Similarly, page loading can be sped up by keeping JS files from blocking page rendering. When a script tag has the defer or async attribute, loading the JS file does not block page rendering.

- defer attribute: the browser requests the JS file right away, but delays its execution until the document has been parsed, just before the DOMContentLoaded event fires

- async attribute: the browser requests the JS file in parallel with parsing, and parses and executes it as soon as it is available

Whether to add defer or async to a script tag depends on what the script does and how essential it is. For example, a script that counts page visits can use async (it does not depend on the DOM structure and does not need to run immediately), while a script that renders the comment area can use defer (it can be downloaded early, but can wait to run until the DOM is ready).
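The choice can be made explicit wherever the theme emits its script tags. The sketch below assumes nothing about the actual theme: scriptTag is a hypothetical function and both file paths are placeholders.

```javascript
// Hypothetical helper, for illustration only: emits a script tag with the
// requested loading mode ("blocking", "defer" or "async").
function scriptTag(src, mode = "blocking") {
  const allowed = ["blocking", "defer", "async"];
  if (!allowed.includes(mode)) {
    throw new Error(`unknown loading mode: ${mode}`);
  }
  const attr = mode === "blocking" ? "" : ` ${mode}`;
  return `<script src="${src}"${attr}></script>`;
}

// Placeholder paths: a visit counter can run whenever it arrives (async),
// while the comment renderer waits for the parsed document (defer).
const tags = [
  scriptTag("/js/analytics.js", "async"),
  scriptTag("/js/comments.js", "defer"),
];
```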

Static resource version control

Caching is key to page loading speed. Reloading static resource files that have already been loaded wastes time and bandwidth. The traditional caching strategy based on HTTP cache headers is to cache a file unconditionally for a period of time (max-age), and after that to check with the server whether the file has been modified, using its modification time and ETag. This strategy performs poorly in the following two cases:

- Within the max-age of the forced cache, the file on the server has changed, but the browser still uses the old file (so static resources are updated late, or several static resources become inconsistent with each other)

- After the local cache expires, the browser asks the server again, but the file on the server has not actually changed (a round trip is spent just to learn that the locally cached resource can be used)

One way to version static resources is to add the hash of the file content to the file name. For example, if the original file path is css/style.css and the first 8 characters of the hash are 1234abcd, the path with the hash added becomes css/style.1234abcd.css. The advantage is that whenever the file content changes, the file name changes too. Conversely, when the browser has already cached the file at a given path, the cached file cannot have changed on the server. The browser can therefore use the cached copy directly, without cache negotiation, as long as a long forced-cache max-age is set.
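The naming scheme itself fits in a few lines. This is a minimal sketch assuming Node's built-in crypto and path modules and a SHA-1 digest; the function names contentHash and revisionedPath are made up for illustration.

```javascript
const { createHash } = require("crypto");
const { posix } = require("path");

// First `length` hex characters of the SHA-1 digest of the content.
function contentHash(content, length = 8) {
  return createHash("sha1").update(content).digest("hex").slice(0, length);
}

// "css/style.css" -> "css/style.<hash>.css"
function revisionedPath(filePath, content) {
  const { dir, name, ext } = posix.parse(filePath);
  return posix.join(dir, `${name}.${contentHash(content)}${ext}`);
}
```

The same content always yields the same path, and any change to the content yields a different one, which is what makes a long cache lifetime safe.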

Implementing this in a Hexo blog takes two things: during the Hexo build, rename the static resources and update the paths that reference them; and set a long cache time for the static resources whose names carry a hash, so that they are cached effectively.

Hexo can be extended through custom JS scripts placed in the scripts/ directory. With the hexo.extend.filter.register("after_generate", callback) hook we can add, delete and modify static files after Hexo has generated them, which is enough to rename the static files as described above. The code is as follows:
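How the cache time is set depends on where the site is hosted, but the rule is the same everywhere. The sketch below is an assumption-laden illustration, not the configuration used for this blog: cacheControlFor is a hypothetical function, the pattern assumes an 8-character hexadecimal hash before the extension, and the one-year max-age with immutable is a common choice rather than a requirement.

```javascript
// Assumption: hashed files look like "name.1234abcd.ext" (8 hex characters).
const HASHED_FILE = /\.[0-9a-f]{8}\.[a-z0-9]+$/i;

// Hypothetical helper: picks a Cache-Control value for a URL path.
function cacheControlFor(urlPath) {
  if (HASHED_FILE.test(urlPath)) {
    // The content behind a hashed name never changes, so cache it for a year.
    return "public, max-age=31536000, immutable";
  }
  // Everything else (HTML pages, unhashed files) is revalidated on each use.
  return "no-cache";
}
```

HTML pages keep a revalidating policy because their names stay the same while the hashed paths they reference change with each build.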

const hasha = require("hasha");
const minimatch = require("minimatch");

// Read a stream to the end and resolve with its content as one Buffer
function stream2buffer(stream) {
  return new Promise((resolve, reject) => {
    const _buf = [];
    stream.on("data", (chunk) => _buf.push(chunk));
    stream.on("end", () => resolve(Buffer.concat(_buf)));
    stream.on("error", (err) => reject(err));
  });
}

const readFileAsBuffer = (filePath) => {
  return stream2buffer(hexo.route.get(filePath));
};

const readFileAsString = async (filePath) => {
  const buffer = await readFileAsBuffer(filePath);
  return buffer.toString();
};

// Split "dir/name.ext" into ["dir", "name", ".ext"]
const parseFilePath = (filePath) => {
  const parts = filePath.split("/");
  const originalFileName = parts[parts.length - 1];
  const dotPosition = originalFileName.lastIndexOf(".");
  const dirname = parts.slice(0, parts.length - 1).join("/");
  const basename =
    dotPosition === -1
      ? originalFileName
      : originalFileName.substring(0, dotPosition);
  const extension =
    dotPosition === -1 ? "" : originalFileName.substring(dotPosition);
  return [dirname, basename, extension];
};

const genFilePath = (dirname, basename, extension) => {
  let dirPrefix = "";
  if (dirname) {
    dirPrefix += dirname + "/";
  }
  if (extension && !extension.startsWith(".")) {
    extension = "." + extension;
  }
  return dirPrefix + basename + extension;
};

const getRevisionedFilePath = (filePath, revision) => {
  const [dirname, basename, extension] = parseFilePath(filePath);
  return genFilePath(dirname, `${basename}.${revision}`, extension);
};

// Emit a placeholder path; the real hash is filled in after generation
const revisioned = (filePath) => {
  return getRevisionedFilePath(filePath, `!!revision:${filePath}!!`);
};

hexo.extend.helper.register("revisioned", revisioned);

hexo.extend.filter.register("stylus:renderer", function (style) {
  style.define("revisioned", (node) => {
    return new style.nodes.String(revisioned(node.val));
  });
});

const calcFileHash = async (filePath) => {
  const buffer = await stream2buffer(hexo.route.get(filePath));
  return hasha(buffer, { algorithm: "sha1" }).substring(0, 8);
};

async function replaceRevisionPlaceholder() {
  const options = hexo.config.new_revision || {};
  const include = options.include || [];
  const enable = !!options.enable || false;
  if (!enable) {
    return false;
  }

  const hashPromiseMap = {};
  const hashMap = {};
  const doHash = (filePath) =>
    calcFileHash(filePath).then((hash) => {
      hashMap[filePath] = hash;
    });

  // Step 1: replace the placeholders in generated CSS, JS and HTML files
  await Promise.all(
    hexo.route.list().map(async (path) => {
      const [, , extension] = parseFilePath(path);
      if (![".css", ".js", ".html"].includes(extension)) {
        return;
      }
      let fileContent = await readFileAsString(path);
      const regexp = /\.!!revision:([^\)]+?)!!/g;
      const matchResult = [...fileContent.matchAll(regexp)];
      if (matchResult.length) {
        const hashTaskList = [];
        // Get file hash asynchronously
        matchResult.forEach((group) => {
          const filePath = group[1];
          if (!(filePath in hashPromiseMap)) {
            hashPromiseMap[filePath] = doHash(filePath);
          }
          hashTaskList.push(hashPromiseMap[filePath]);
        });
        // Wait for all hashes to complete
        await Promise.all(hashTaskList);
        // Replace placeholder
        fileContent = fileContent.replace(regexp, function (match, filePath) {
          if (!(filePath in hashMap)) {
            throw new Error("file hash not computed");
          }
          return "." + hashMap[filePath];
        });
        hexo.route.set(path, fileContent);
      }
    })
  );

  // Step 2: also hash every file matched by the `include` patterns
  await Promise.all(
    hexo.route.list().map(async (path) => {
      for (let i = 0, len = include.length; i < len; i++) {
        if (minimatch(path, include[i])) {
          return doHash(path);
        }
      }
    })
  );

  // Step 3: publish each hashed file under its revisioned path and remove the original
  await Promise.all(
    Object.keys(hashMap).map(async (filePath) => {
      hexo.route.set(
        getRevisionedFilePath(filePath, hashMap[filePath]),
        await readFileAsBuffer(filePath)
      );
      hexo.route.remove(filePath);
    })
  );
}

hexo.extend.filter.register("after_generate", replaceRevisionPlaceholder);

On-demand loading based on IntersectionObserver

Some JS scripts that render content in a Hexo blog do not have to run as soon as the page loads (for example, the JS that renders the comment area). Besides keeping them from blocking page rendering as described above, their loading can also be postponed until just before the visitor sees the corresponding content, that is, on-demand loading. This relies on the IntersectionObserver API.

Before calling the IntersectionObserver API, handle compatibility first, so that page content does not go missing in browsers that lack the API. Then create an IntersectionObserver that watches for the element entering the viewport. When the visitor is about to see the element, the corresponding JS is loaded and executed. The code is as follows:

function loadComment() {
  // Insert script tag
}

if ('IntersectionObserver' in window) {
  const observer = new IntersectionObserver(function (entries) {
    // This callback fires when the observed element's intersection with the viewport changes
    if (entries[0].isIntersecting) {
      // Trigger JS loading
      loadComment();
      // Stop observing so the callback is not triggered again
      observer.disconnect();
    }
  }, {
    // Threshold for triggering the callback: here it fires once 10% of the element is visible
    threshold: [0.1],
  });
  observer.observe(document.getElementById('comment'));
} else {
  // The browser does not support IntersectionObserver, so load the JS immediately
  loadComment();

}

Font subsetting

As mentioned earlier, my blog loads fonts through Google Fonts; specifically, the Chinese font Noto Serif SC (Source Han Serif) is used for titles. First, how does Google Fonts serve online fonts with large character sets such as Chinese? Distributing a Chinese font to visitors as one complete font file is unrealistic: a complete Chinese font contains thousands or even tens of thousands of characters, so the font file is at least several megabytes and takes a long time to download in full. Browser support for the unicode-range descriptor of @font-face changes this.

unicode-range lets us tell the browser to use a particular font file only for particular characters. For example, the following rule tells the browser that this font applies only to the four characters 电 (U+7535), 脑 (U+8111), 星 (U+661F) and 人 (U+4EBA). Google Fonts splits a font into many files in this way, and the browser downloads only the font files it needs when rendering the page, rather than all of them.

@font-face {
  font-family: 'Noto Serif SC';
  font-style: normal;
  font-weight: 400;
  font-display: swap;
  src: url("./font/logo.woff2") format("woff2");
  unicode-range: U+7535,U+8111,U+661F,U+4EBA;

}

Unfortunately, in the font files provided by Google Fonts, the four characters of my home page title happen to be spread across four different font files. Is there a way to put them together? Yes, with the following code:

const fontCarrier = require('font-carrier');

const transFont = fontCarrier.transfer('./NotoSerifSC-Regular.otf');
// Keep only the four title characters listed in unicode-range above
transFont.min('电脑星人');
transFont.output({
  path: './logo'

});

The font-carrier library is used here. It lets us cut out only the characters the page needs from the complete font file and generate a subset of the font, which improves font loading and display. (At present this is only used for the blog's page title; I have not yet collected all article titles to generate a font file for article page titles.)
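The unicode-range value for a subset does not have to be looked up by hand; it can be derived from the same string that is passed to the subsetting step. A minimal sketch, where toUnicodeRange is a hypothetical helper name:

```javascript
// Hypothetical helper: lists the code points of the distinct characters in
// `text` in the comma-separated U+XXXX form used by unicode-range.
function toUnicodeRange(text) {
  const codePoints = [
    ...new Set(Array.from(text, (ch) => ch.codePointAt(0))),
  ];
  return codePoints
    .map((cp) => "U+" + cp.toString(16).toUpperCase().padStart(4, "0"))
    .join(",");
}

// The four title characters give the value used in the @font-face rule above.
console.log(toUnicodeRange("电脑星人")); // U+7535,U+8111,U+661F,U+4EBA
```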

Preload next page

Finally, a more "clever" method. The optimizations so far target a single page view, but a visit to a site is usually a continuous process: a visitor who finds the home page interesting is likely to follow the hyperlinks on it to the site's inner pages. Loading of static resources such as CSS and JS has already been optimized through caching, so is there also room to optimize the loading of the Hexo blog's static HTML files? The answer is yes.

quicklink (https://github.com/GoogleChromeLabs/quicklink) is used here. It works as follows:

- Watch for <a> tags that enter the browser viewport through IntersectionObserver

- Wait for the browser to become idle (by registering a callback through requestIdleCallback)

- Insert a <link rel="prefetch" href="URL the <a> tag points to"> tag (this tells the browser to request the URL and cache the resource it points to)

In this way, before a visitor clicks a hyperlink to an inner page of the blog, the HTML, CSS and JS files of that page should already be cached in the browser. The network time spent during page navigation is greatly reduced, which further speeds up loading of the next page.
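The three steps can be sketched in a few lines of browser JavaScript. This is a simplified illustration of the principle and not quicklink's actual source: prefetchVisibleLinks is a hypothetical name, the win parameter exists only so the function can be exercised outside a browser, and the real library applies further checks that are omitted here.

```javascript
// Simplified sketch of the principle described above; not quicklink's code.
function prefetchVisibleLinks(win = window) {
  const doc = win.document;
  const prefetched = new Set();

  // Step 3: ask the browser to fetch and cache the URL.
  const prefetch = (url) => {
    if (prefetched.has(url)) return;
    prefetched.add(url);
    const link = doc.createElement("link");
    link.rel = "prefetch";
    link.href = url;
    doc.head.appendChild(link);
  };

  // Step 2: wait for idle time; fall back to a short timeout
  // where requestIdleCallback is unavailable.
  const whenIdle = win.requestIdleCallback
    ? (cb) => win.requestIdleCallback(cb)
    : (cb) => win.setTimeout(cb, 1);

  // Step 1: watch links and react when one enters the viewport.
  const observer = new win.IntersectionObserver((entries) => {
    entries.forEach((entry) => {
      if (!entry.isIntersecting) return;
      observer.unobserve(entry.target);
      whenIdle(() => prefetch(entry.target.href));
    });
  });

  doc.querySelectorAll("a[href]").forEach((a) => observer.observe(a));
  return observer;
}
```

In a page it would be called once after loading has finished, for example from a load event listener, so that prefetching does not compete with the current page's own resources.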

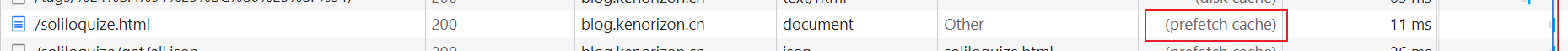

prefetch preloads the next page:

The prefetch cache is used during page navigation: